Industries & Use Cases

Learn about the innovative ways the Cesium platform is used across industries.

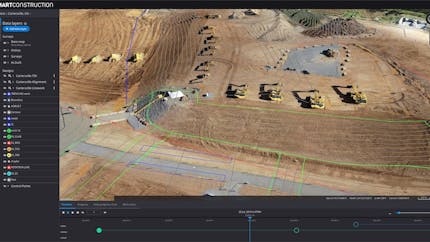

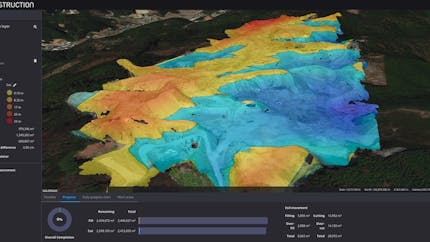

AEC

Cesium is helping architecture, engineering, and construction teams achieve gains in project productivity, efficiency, and cost savings.

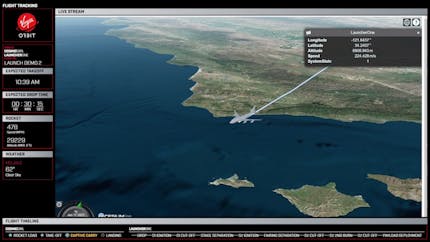

Aerospace

Cesium's roots are in aerospace, and today the platform still brings precise 4D visualization and simulation to air and space.

Digital Twins

Create accurate and stunning 3D virtual worlds with Cesium.

Digital Twins for Defense

High-quality 3D visualization and runtime performance, advanced analytics, and data interoperability in the cloud or self-hosted.

Drones

Bring your 3D drone data into Cesium and stream it to digital twins and apps.

Federal/Defense

Cesium is the foundational open platform for building 3D geospatial applications that support mission-critical operations.

Flight Operations

Build powerful flight planning and simulation apps.

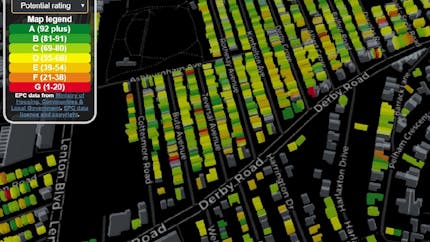

Smart Cities

Create accurate digital twins with Cesium by combining real-time sensor data and 3D imagery with Cesium global 3D content.

Smart Spaces and IoT

Visualize and analyze IoT data in a 3D geospatial context.

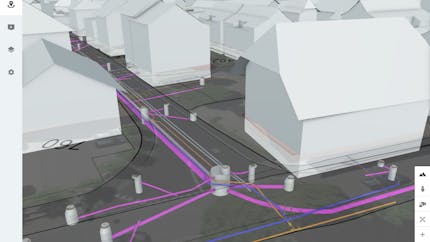

Underground and Undersea

Build apps that explore and analyze subsurface spaces, structures, terrain, and data.

Your Industry

Cesium partners with vision-aligned organizations positioned to bring digital transformation through the application of 3D geospatial technology.