Procedural Terrain Generation with Noise Functions

This is a guest post by Rudraksha Shah about the work he and Rishabh Shah contributed to Cesium as part of a project for CIS 700: Procedural Graphics at the University of Pennsylvania. -Sarah

Cesium renders geometry based on the camera position: as the camera moves closer to an object, a higher-resolution mesh is rendered. Cesium gets this geometry as pre-modeled tileset data at different levels of detail (LOD). Our goal was to develop an algorithm that does not require pre-modeled data and instead procedurally generates terrain with incremental level of detail. The generated terrain should also remain consistent across levels of detail. Because Cesium fetches tiles with geometry data from a server, we created a web server to provide the generated content.

Noise

We used multi-octave noise to generate terrain, and we used multi-octave noise and Voronoi diagrams to texture it.

Terrain contains large details like mountains and smaller details like rocks. To capture both, multiple noise-based height fields are added on top of one another, each sampled from the noise domain at a different frequency and scaled by a different amplitude. This is called multi-octave noise.
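To make the layering concrete, here is a minimal sketch of multi-octave summation. It is not our server code: `base_noise` is a placeholder smooth function standing in for a real noise function, and the parameter names (`persistence`, `lacunarity`) and the 1-based amplitude convention (octave i has amplitude `persistence**i`) are assumptions made for illustration.

```python
import math


def base_noise(x: float, y: float) -> float:
    """Placeholder height field in [-1, 1]; a stand-in for a real noise function."""
    return math.sin(x) * math.cos(y)


def multi_octave(x, y, octaves=4, persistence=0.5, lacunarity=2.0, noise=base_noise):
    """Sum `octaves` layers of `noise`, each at a higher frequency and lower amplitude."""
    total = 0.0
    amplitude = persistence  # assumed convention: octave i has amplitude persistence**i
    frequency = 1.0
    for _ in range(octaves):
        total += amplitude * noise(x * frequency, y * frequency)
        amplitude *= persistence  # later octaves contribute smaller details
        frequency *= lacunarity   # later octaves vary faster across the surface
    return total


# Coarse and fine versions of the same location share the low-frequency shape.
coarse = multi_octave(0.3, 0.7, octaves=2)
fine = multi_octave(0.3, 0.7, octaves=6)
```

With a persistence below 1, each extra octave changes the height by less than the previous one, which is why adding octaves refines the terrain without moving the mountains.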

To generate the same noise values at different levels of detail, we require a pseudo-random noise function whose value at a point in space depends only on the position of that point. This gives the same mountains and valleys, but with different levels of detail, as the camera moves closer to or away from the tiles. This kind of noise is called lattice noise, because the space is divided into a 3D grid and the lattice points of the grid are used to determine the noise values. We take the points on the 2D plane and generate a noise value for each point. This noise value is then used to offset the vertex by some amount, and finally we map the transformed vertices onto the 3D sphere.
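As an illustration of position-based lattice noise, the sketch below hashes integer lattice coordinates to repeatable values and interpolates between the eight surrounding lattice points. The hash constants, the `seed` parameter, and the smoothstep interpolation are assumptions chosen for the example; they are not necessarily what our implementation uses.

```python
import math


def lattice_value(ix: int, iy: int, iz: int, seed: int = 0) -> float:
    """Hash integer lattice coordinates to a repeatable value in [0, 1)."""
    h = (ix * 73856093) ^ (iy * 19349663) ^ (iz * 83492791) ^ (seed * 2654435761)
    h &= 0xFFFFFFFF
    h ^= h >> 16
    h = (h * 0x45D9F3B) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 2**32


def smoothstep(t: float) -> float:
    """Ease the interpolation weight so the noise has no creases at cell borders."""
    return t * t * (3.0 - 2.0 * t)


def lerp(a: float, b: float, t: float) -> float:
    return a + (b - a) * t


def lattice_noise(x: float, y: float, z: float, seed: int = 0) -> float:
    """Interpolate the hashed values at the 8 lattice points around (x, y, z)."""
    x0, y0, z0 = math.floor(x), math.floor(y), math.floor(z)
    tx, ty, tz = smoothstep(x - x0), smoothstep(y - y0), smoothstep(z - z0)

    def corner(dx: int, dy: int, dz: int) -> float:
        return lattice_value(x0 + dx, y0 + dy, z0 + dz, seed)

    # Interpolate along x on four edges, then along y, then along z.
    x00 = lerp(corner(0, 0, 0), corner(1, 0, 0), tx)
    x10 = lerp(corner(0, 1, 0), corner(1, 1, 0), tx)
    x01 = lerp(corner(0, 0, 1), corner(1, 0, 1), tx)
    x11 = lerp(corner(0, 1, 1), corner(1, 1, 1), tx)
    return lerp(lerp(x00, x10, ty), lerp(x01, x11, ty), tz)
```

Because the result depends only on the coordinates and the seed, two tiles requested at different times or at different levels of detail get the same value wherever they sample the same point.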

Our approach

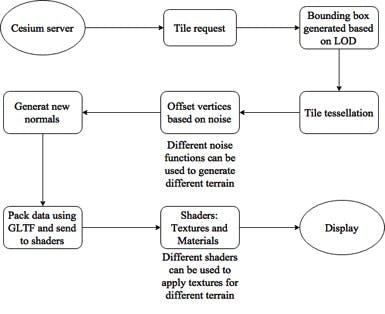

When a tile is requested from the server, we first determine the bounding box for the tile, then subdivide the region inside the bounding box into a 3D grid for lattice noise with an appropriate level of detail, and finally generate the vertices conforming to the height field.

This is achieved as follows:

- Generate a bounding box: Four corners of the tile are determined based on the position of the tile.

- The corners are offset using 3D multi-octave lattice noise. Here, we also compute the maximum and minimum height for the bounding box encompassing the tile.

- While generating the bounding box, the distance of the camera is also taken into account. The closer the camera, the greater the number of octaves. This helps in determining a tighter-fitting bounding box.

The distance metric based on the four corners of the bounding box is not sufficient to generate a tight bounding box; we also need to estimate and add the geometric error. Points inside the tile may have heights that exceed the maximum or minimum bounds computed from the corners, so to estimate the geometric error we look three octaves deeper; i.e., we sum the amplitudes of octaves k+1 through k+3, where k is the number of octaves used for the tile.

Error = pow(persistence,k+1) + pow(persistence,k+2) + pow(persistence,k+3)
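The expression above translates directly into a few lines of code. The sketch below evaluates it and, as an illustrative assumption about how the estimate might be applied, pads a tile's minimum and maximum heights by the result; the function names and the `extra_octaves` parameter are hypothetical.

```python
def geometric_error(persistence: float, k: int, extra_octaves: int = 3) -> float:
    """Sum the amplitudes of the octaves k+1 .. k+extra_octaves that were not sampled."""
    return sum(persistence ** (k + i) for i in range(1, extra_octaves + 1))


def padded_height_range(min_height: float, max_height: float, error: float):
    """Widen a height range so unsampled detail still fits inside it (assumed usage)."""
    return min_height - error, max_height + error


error = geometric_error(persistence=0.5, k=2)
print(error)  # 0.21875
print(padded_height_range(-1.0, 2.0, error))  # (-1.21875, 2.21875)
```

For a persistence below 1 the estimate shrinks as k grows, so tiles close to the camera, which use more octaves, get tighter bounds.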

Generating the bounding box.

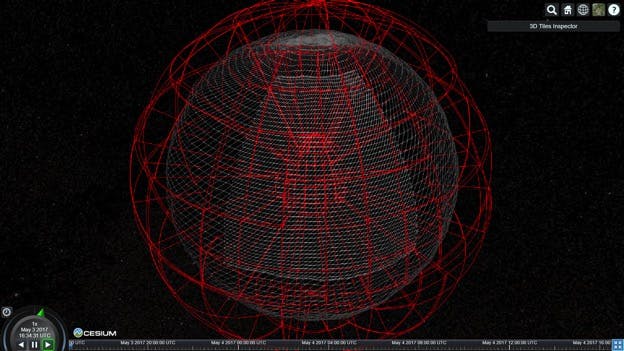

- Subdivide Tile: Once we have the bounding box for the tile, we subdivide the tile into a 9x9 grid. This grid, which is created in 2D space and mapped to the geo sphere, is used for mesh generation. The 9x9 grid is used only for the lowest LOD. As the LOD increases, the grid dimensions also increase, providing a denser mesh on which to generate the terrain.

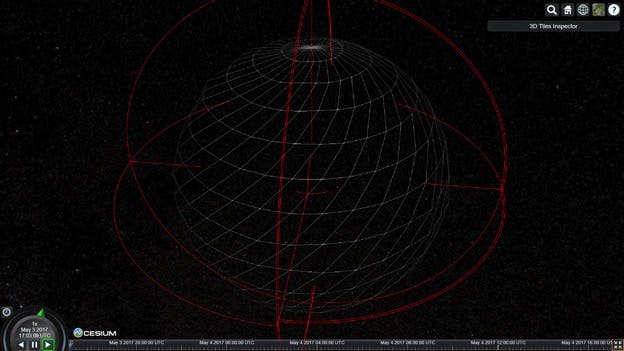

- Level-of-Detail: The closer the mesh is to the camera, the more tiles are rendered, and the more tiles there are, the more finely subdivided the mesh on which the terrain is rendered.
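The subdivision step can be sketched as follows. This is a simplified illustration rather than our server code: it assumes the tile bounds are given as longitude and latitude in radians, that "9x9" means nine vertices per side, and that the surface is a unit sphere instead of an ellipsoid. The `height_fn` parameter is a hypothetical hook where the noise-based height would be supplied.

```python
import math


def tile_grid(west, south, east, north, n=9):
    """Return n*n (lon, lat) samples covering the tile bounds, edges included."""
    points = []
    for j in range(n):
        lat = south + (north - south) * j / (n - 1)
        for i in range(n):
            lon = west + (east - west) * i / (n - 1)
            points.append((lon, lat))
    return points


def to_sphere(lon, lat, height=0.0, radius=1.0):
    """Map a (lon, lat) sample to 3D, pushed outward from the sphere by `height`."""
    r = radius + height
    cos_lat = math.cos(lat)
    return (r * cos_lat * math.cos(lon), r * cos_lat * math.sin(lon), r * math.sin(lat))


def tile_vertices(west, south, east, north, n=9, height_fn=lambda lon, lat: 0.0):
    """Build the vertex list for one tile; a larger n gives a denser mesh."""
    return [
        to_sphere(lon, lat, height_fn(lon, lat))
        for lon, lat in tile_grid(west, south, east, north, n)
    ]


# Lowest level of detail: 9 vertices per side, 81 vertices in total.
vertices = tile_vertices(0.0, 0.0, math.pi / 4, math.pi / 4)
```

Because neighbouring samples along a shared tile edge are computed from the same longitude and latitude, a position-based height function gives them matching heights.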

Level-of-detail.

Adding textured appearance.

Final application
