Cesium for Autonomous Driving Simulation

During the GDC and GTC conferences, Cesium received a lot of attention for autonomous driving simulation and visualization.

Autonomous driving systems will require several billion miles of test driving to prove that they’re better drivers than humans. A fleet of 20 test vehicles can cover only a million miles in an entire year. There are 4.12 million miles of roads in the US alone.

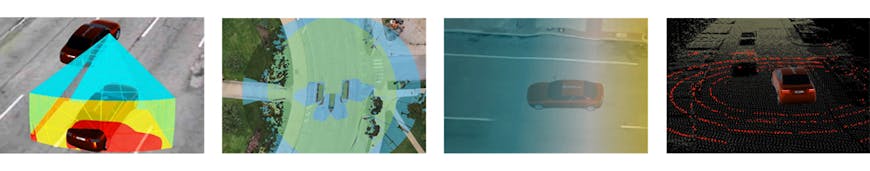

Simulation improves testing efficiency, safety, and comprehensiveness. Vehicles capture the environment with LIDAR, cameras, and other sensors, and then this environment is used to simulate combinations of different scenarios:

- Various vehicle sensor configurations

- Autonomous driving software updates and bug fixes

- HD maps

- Weather conditions

- Lighting conditions

- Traffic patterns

- Pedestrian patterns

- Road conditions, construction, etc.

In addition to simulation, autonomous vehicle development also benefits from visualizing logs and real-time data from road tests. What is the vehicle's behavior and environment? When did the vehicle accelerate or stop? When did its sensors disagree with its HD map? What is the state of the autonomous fleet currently on the road?

In-dash visualization is also used to inform the test driver and passengers about the autonomous operation, and, in the future, will be used for entertainment, navigation, and situational awareness.

Why Cesium for Autonomous Driving?

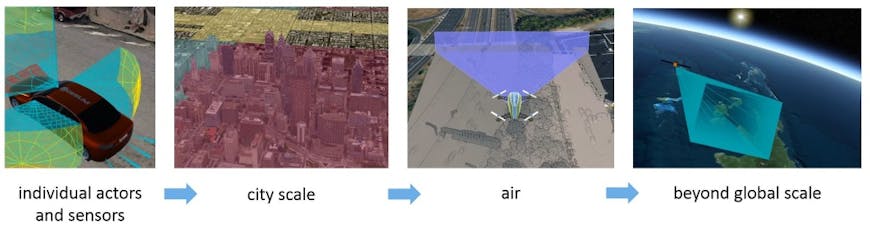

As a horizontal 3D map platform, Cesium has been used across verticals including drones, AR, A&D, and BIM.

From massive heterogeneous dataset streaming to high-precision rendering to time-dynamic simulation, Cesium has built up a feature set and developer ecosystem that forms a flexible foundation for the unique autonomous driving challenges outlined below.

1. Massive amounts of data

An autonomous vehicle can easily collect 4 TB of data per day, including 40 MB of camera frames per second, 70 MB of LIDAR points per second, and time-varying telemetry from GPS, gyroscopes, and accelerometers.
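As a rough sanity check on these figures, the sketch below works out how many hours of driving it takes to reach 4 TB at the quoted camera and LIDAR rates. It assumes decimal units (1 TB = 10^12 bytes), ignores telemetry (which is small by comparison), and reuses the 20-vehicle fleet size mentioned earlier purely for illustration.

```python
# Figures quoted in the article; decimal units are an assumption.
CAMERA_MB_PER_S = 40
LIDAR_MB_PER_S = 70
DAILY_TB_PER_VEHICLE = 4
FLEET_SIZE = 20  # fleet size used earlier in the article

# Combined camera + LIDAR rate in bytes per second (telemetry ignored).
sensor_bytes_per_s = (CAMERA_MB_PER_S + LIDAR_MB_PER_S) * 10**6

# Hours of continuous capture needed to accumulate one day's 4 TB.
hours_to_fill = DAILY_TB_PER_VEHICLE * 10**12 / sensor_bytes_per_s / 3600

# Total collected per day if every vehicle in the fleet logs 4 TB.
fleet_tb_per_day = DAILY_TB_PER_VEHICLE * FLEET_SIZE

print(f"{hours_to_fill:.1f} hours of driving per {DAILY_TB_PER_VEHICLE} TB")
print(f"{fleet_tb_per_day} TB per day for the fleet")
```

Under these assumptions, 4 TB corresponds to roughly 10 hours of driving, and a 20-vehicle fleet produces about 80 TB per day.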

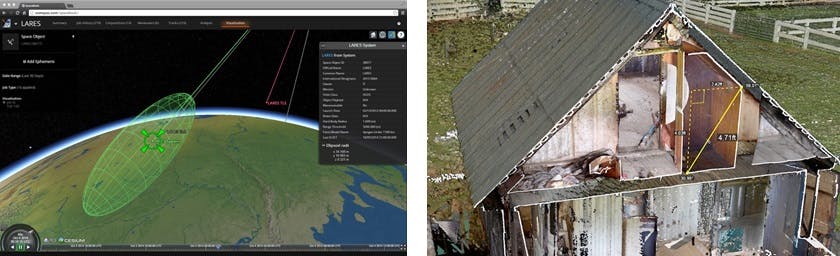

Real-time visualization and log playback of this data require efficient streaming of time-dynamic data. Cesium's open time-dynamic format, CZML, was originally created for aerospace use cases and is designed for exactly this: it can batch several frames into windows, much like streaming video.
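To make the windowing idea concrete, here is a minimal sketch that groups GPS samples into fixed-length time windows and emits one CZML packet per window. CZML is JSON, so only the standard library is needed. The function name `czml_windows`, the vehicle id, the document name, the date, and the sample track are all hypothetical; the sketch also assumes a client that merges packets sharing the same `id`, and it is not taken from any Cesium tooling.

```python
import json
from datetime import datetime, timezone

def czml_windows(vehicle_id, epoch, samples, window_seconds):
    """Return a CZML document packet plus one position packet per time window.

    samples: iterable of (seconds_since_epoch, lon_deg, lat_deg, height_m).
    """
    # Bucket samples by window index, flattening each sample into the
    # [time, longitude, latitude, height, ...] layout used by cartographicDegrees.
    windows = {}
    for t, lon, lat, height in samples:
        windows.setdefault(int(t // window_seconds), []).extend([t, lon, lat, height])

    iso_epoch = epoch.strftime("%Y-%m-%dT%H:%M:%SZ")
    packets = [{"id": "document", "name": "road-test-log", "version": "1.0"}]
    for index in sorted(windows):
        # Every window reuses the same id, so each packet adds samples
        # to the same vehicle rather than creating a new object.
        packets.append({
            "id": vehicle_id,
            "position": {"epoch": iso_epoch, "cartographicDegrees": windows[index]},
        })
    return packets

# Hypothetical 1 Hz GPS track, 10 seconds long.
epoch = datetime(2018, 4, 1, 12, 0, 0, tzinfo=timezone.utc)
track = [(float(t), -77.05 + 1e-4 * t, 38.87, 10.0) for t in range(10)]
packets = czml_windows("vehicle-1", epoch, track, window_seconds=5)
print(json.dumps(packets[1], indent=2))
```

With a 5-second window, the 10-second track becomes a document packet followed by two position packets, each of which could be sent to the viewer as soon as its window is complete.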

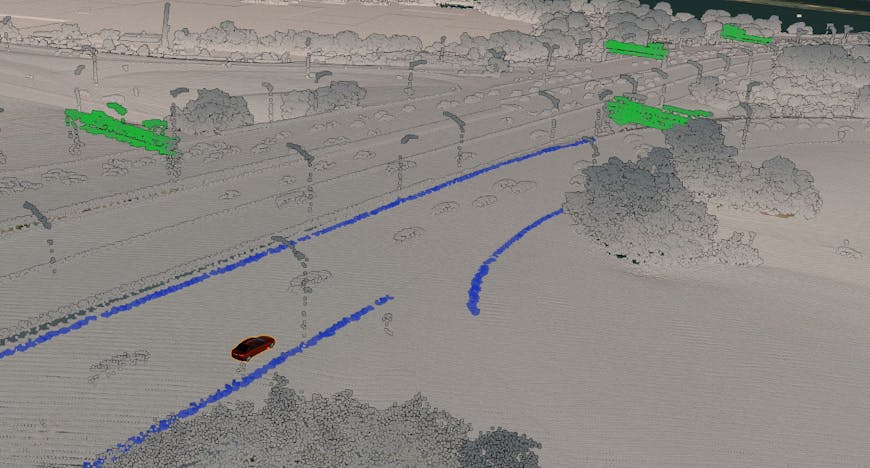

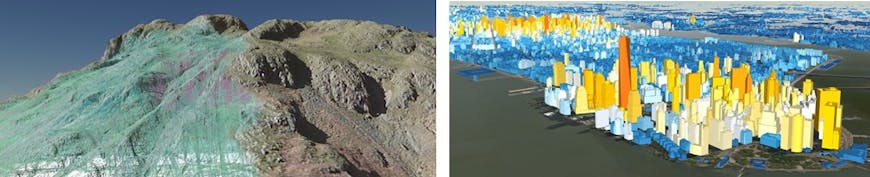

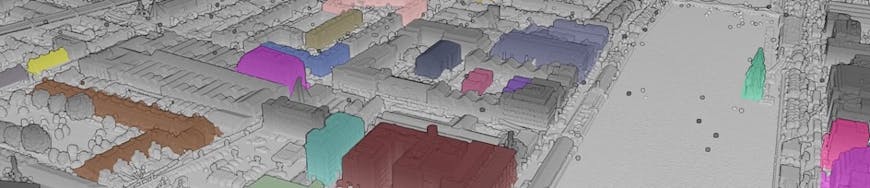

Recreating a virtual environment from the collected photogrammetry (from cameras) and LIDAR data requires the ability to stream massive heterogeneous 3D geospatial datasets. Cesium's open 3D Tiles is designed for exactly this—streaming only the part of the 3D model needed for the current virtual view and keeping the rest of the data in the cloud.
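The idea of streaming only what the current view needs can be sketched with a small tile tree and a screen-space error test. This is a simplified illustration, not CesiumJS's implementation: the `Tile` class, the precomputed `distance` field, the example tree, and the 16-pixel threshold are assumptions, and frustum culling and request scheduling are left out. The error formula is a common perspective-projection approximation.

```python
import math
from dataclasses import dataclass, field

@dataclass
class Tile:
    name: str
    geometric_error: float  # meters of error if this tile is shown instead of its children
    distance: float         # meters from the camera (hypothetical, precomputed)
    children: list = field(default_factory=list)

def screen_space_error(tile, screen_height_px, fov_y_rad):
    """Approximate on-screen size, in pixels, of the tile's geometric error."""
    distance = max(tile.distance, 1e-6)  # avoid division by zero at the camera
    return tile.geometric_error * screen_height_px / (
        2.0 * distance * math.tan(fov_y_rad / 2.0)
    )

def select_tiles(tile, screen_height_px=1080, fov_y_rad=math.radians(60), max_sse=16.0):
    """Return the names of the tiles that would be requested for this view."""
    good_enough = screen_space_error(tile, screen_height_px, fov_y_rad) <= max_sse
    if good_enough or not tile.children:
        return [tile.name]
    selected = []
    for child in tile.children:
        selected += select_tiles(child, screen_height_px, fov_y_rad, max_sse)
    return selected

# Hypothetical tileset: one branch close to the camera, one far away.
root = Tile("root", 100.0, 500.0, [
    Tile("near", 10.0, 50.0, [Tile("near/a", 0.0, 40.0), Tile("near/b", 0.0, 60.0)]),
    Tile("far", 10.0, 2000.0, [Tile("far/a", 0.0, 1990.0), Tile("far/b", 0.0, 2010.0)]),
])
print(select_tiles(root))  # ['near/a', 'near/b', 'far']
```

The branch near the camera is refined down to its leaves, while the distant branch is satisfied by a single coarse tile, so its detailed children are never downloaded.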

Point cloud of I395 outside Washington, DC.

Less than 1% of the full point cloud 3D tileset!

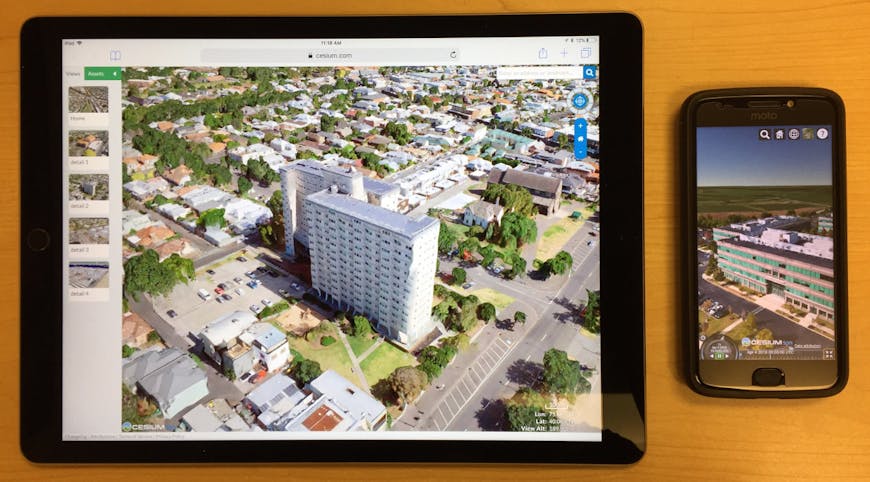

Given the massive amount of telemetry and environment data collected, users do not want to have to download an entire log database to play back an autonomous vehicle road test ... or, even worse, imagine doing this for a fleet of 20 vehicles at 4 TB each. Since Cesium is 100% web-based, it runs in a browser on any device using WebGL, from phones to tablets to laptops, and streams from the cloud only the data currently needed for the simulation.

2. Flexibility and interoperability

Cesium is uniquely focused on visualization, which allows others in the autonomous driving industry to focus on AI and other areas and easily leverage Cesium for visualization.

Car manufacturers use a combination of software and hardware that is developed in-house and by third parties. All of these components must interoperate with each other via well-defined (and ideally open) APIs and formats.

CesiumJS has a very flexible API with more than 450 top-level entry points that let developers control every detail, from the brightness of the map to the orientation of a vehicle to the shade of the atmosphere. Since CesiumJS is open source, developers can modify any part of the code and even contribute code back, as more than 100 developers have already done.

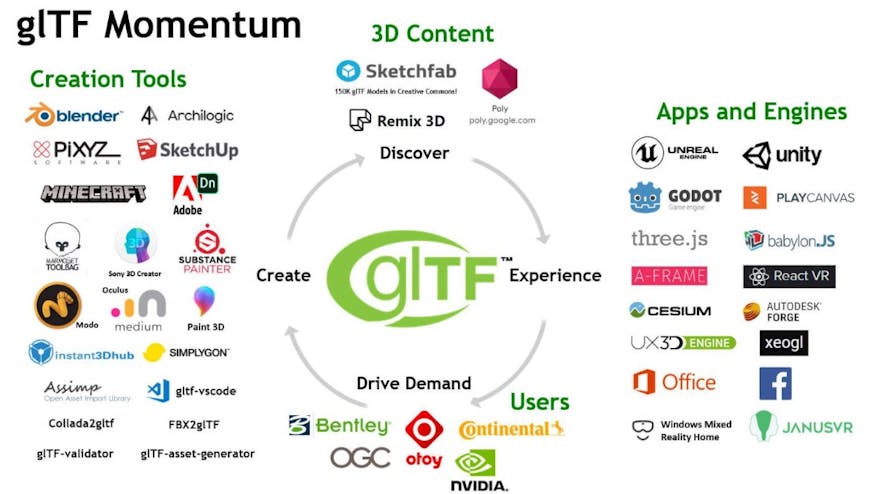

The formats Cesium uses are open, widely supported, and optimized for 3D runtime. The Cesium team created 3D Tiles, a candidate OGC Community Standard, and co-created glTF, an open Khronos standard used by Google, Microsoft, Facebook, and others. These formats form the foundation for streaming individual 3D models, such as cars, as well as massive environments, such as point clouds and photogrammetry, on the web.

The commercial Cesium ion content pipeline provides 3D tiling for point clouds, photogrammetry, 3D buildings, and other massive environments using 3D Tiles, allowing developers to mix and match interoperable components.

3. High-precision rendering

Autonomous driving requires accuracy—ideally sub-cm accuracy—for the safest and most precise measurements.

Originally built for aerospace, Cesium was designed from the ground up for high-precision rendering, both for individual vertex position transforms and for massive view distances. For example, Cesium has been used to track every satellite in space and to render sub-cm point clouds with 6.4 billion points. With Cesium you could see the view from inside a car to a distant mountain to the International Space Station ... seriously!
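To see why vertex precision is a challenge at globe scale, consider that GPUs typically work in 32-bit floats. The sketch below rounds an Earth-scale coordinate to 32 bits and compares the error with storing the same position relative to a nearby center. Rendering relative to a local center is one well-known mitigation; the source does not say which technique Cesium uses, and the coordinate values and the helper `to_f32` are made up for illustration.

```python
import struct

def to_f32(value):
    """Round a Python float (64-bit) to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", value))[0]

# Hypothetical coordinate at roughly Earth-radius distance from the origin, in meters.
x = 6378137.123

# Storing the absolute coordinate in 32 bits: representable values near
# 6.4 million are 0.5 m apart, so centimeter detail is lost.
absolute_error = abs(to_f32(x) - x)

# Storing the offset from a nearby center instead: the subtraction happens
# in 64 bits, and only the small offset is rounded to 32 bits.
center = 6378000.0
relative_error = abs(center + to_f32(x - center) - x)

print(f"absolute float32 error: {absolute_error:.3f} m")
print(f"relative-to-center error: {relative_error:.6f} m")
```

In this example the absolute coordinate is off by about 12 cm after rounding, while the relative-to-center version stays well below a millimeter.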

Terrain and 3D buildings from geospatial data sources.

With Cesium, you can fuse data collected from multiple autonomous vehicles into one virtual environment and augment that with geospatial data such as terrain, imagery, 3D buildings, and vector data. These data may be captured by drones, aircraft, satellites, or surveys, or provided as open data by governments.

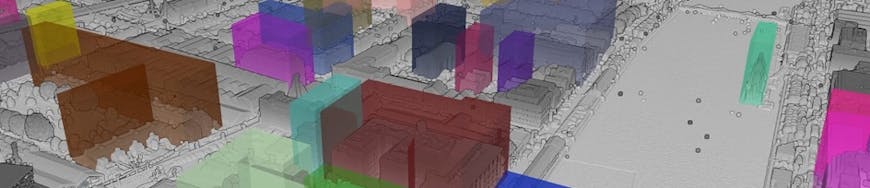

Separate vector (boxes) and point cloud tileset.

Applying the vector tileset to classify the point cloud.

This local environment is accurately globally georeferenced in Cesium's 3D globe.

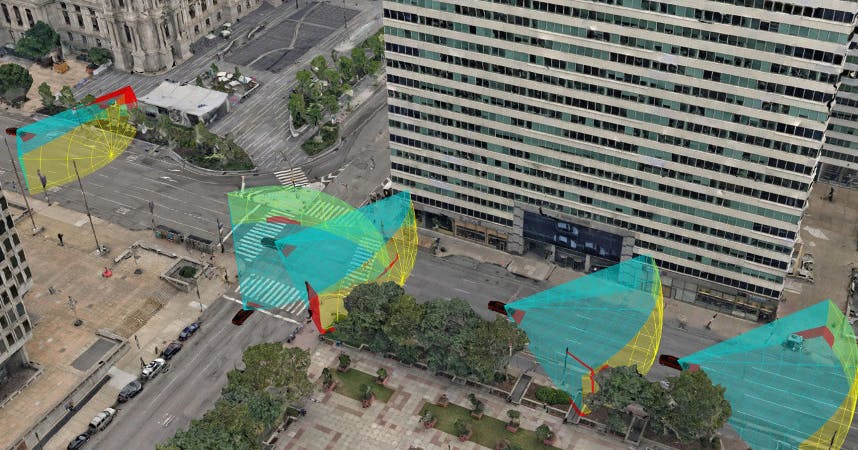

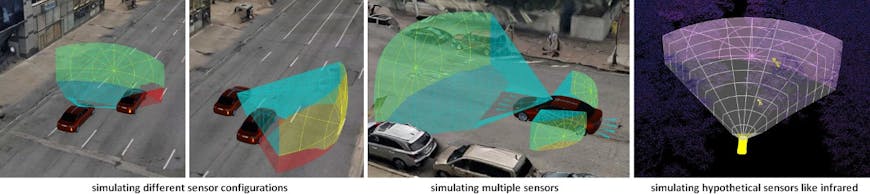
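Placing a local environment on a 3D globe means expressing its positions in a global frame. As an illustration, the sketch below applies the standard WGS84 conversion from longitude, latitude, and height to Earth-centered, Earth-fixed (ECEF) coordinates. It assumes the collected data is already tagged with geodetic coordinates; the function name is hypothetical and the source does not describe how Cesium performs this step internally.

```python
import math

# WGS84 ellipsoid constants.
WGS84_A = 6378137.0                 # semi-major axis in meters
WGS84_F = 1 / 298.257223563         # flattening
WGS84_E2 = WGS84_F * (2 - WGS84_F)  # first eccentricity squared

def geodetic_to_ecef(lon_deg, lat_deg, height_m):
    """Convert WGS84 longitude/latitude (degrees) and height (meters) to ECEF meters."""
    lon, lat = math.radians(lon_deg), math.radians(lat_deg)
    # Prime vertical radius of curvature at this latitude.
    n = WGS84_A / math.sqrt(1 - WGS84_E2 * math.sin(lat) ** 2)
    x = (n + height_m) * math.cos(lat) * math.cos(lon)
    y = (n + height_m) * math.cos(lat) * math.sin(lon)
    z = (n * (1 - WGS84_E2) + height_m) * math.sin(lat)
    return x, y, z

# A point on the equator at the prime meridian lies one semi-major axis along +X.
print(geodetic_to_ecef(0.0, 0.0, 0.0))
```

Once every dataset is expressed in the same global frame, point clouds, vector data, terrain, and imagery from different sources line up without per-dataset alignment.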

5. Simulating vehicle and sensor performance

Adding a new sensor or reconfiguring a sensor array can significantly impact what a vehicle can "see." Likewise, different traffic and pedestrian patterns and weather and lighting conditions further impact visibility. Simulation provides an efficient means of determining this visibility for a given route and environment.

The commercial Cesium ion SDK provides GPU-accelerated line of sight, viewshed, and visibility analytics to efficiently and easily understand the impact of sensor visibility.
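For intuition about what a line-of-sight query computes, here is a minimal CPU sketch over a 1D height profile along a road. It is not the Cesium ion SDK's API or its GPU implementation: the function name, the profile, the sensor height, and the sampling density are all assumptions made for illustration.

```python
def has_line_of_sight(profile, spacing, sensor, target, samples=200):
    """Return True if nothing in the height profile blocks the sensor-to-target ray.

    profile: obstacle/terrain heights in meters, one per cell along the road.
    spacing: cell length in meters; cell i covers x in [i*spacing, (i+1)*spacing).
    sensor, target: (x, z) positions in meters, with x >= 0.
    """
    (x0, z0), (x1, z1) = sensor, target
    for i in range(1, samples):
        t = i / samples
        x = x0 + t * (x1 - x0)
        ray_z = z0 + t * (z1 - z0)
        # Look up the cell under this sample, clamped to the profile bounds.
        cell = min(max(int(x / spacing), 0), len(profile) - 1)
        if profile[cell] > ray_z:
            return False
    return True

# Hypothetical scene: flat road with a 3 m tall parked truck between x = 30 m and 40 m.
profile = [0.0, 0.0, 0.0, 3.0, 0.0, 0.0, 0.0, 0.0]
sensor = (5.0, 1.5)  # roof-mounted sensor, 1.5 m above the road

print(has_line_of_sight(profile, 10.0, sensor, (25.0, 1.0)))  # pedestrian before the truck
print(has_line_of_sight(profile, 10.0, sensor, (75.0, 1.0)))  # pedestrian behind the truck
```

The first query is unobstructed and the second is blocked by the truck. Repeating queries like this for each candidate sensor placement along a route is the kind of analysis that benefits from GPU acceleration.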

What's next?

As the autonomous driving simulation and visualization world quickly evolves, we are excited to talk to car manufacturers, sensor manufacturers, and others in this field. Feel free to say hi.