Cesium Metal Renderer Design for Apple Platforms

This is a guest post by Ryan Walklin about using the Metal renderer with Cesium. - Sarah

Cesium is an open-source WebGL-based virtual globe and geospatial visualization library, built with JavaScript. It runs extremely well on desktop-class hardware in a browser, but more performance can be gained on mobile by using native languages and APIs.

I started porting Cesium to iOS last year, initially using Objective-C, but switched to Swift once the language and compiler began to mature.

The renderer design was initially a near-direct port from WebGL to OpenGL ES 2.0. Since WebGL is a close-to-strict subset of GL ES, this was relatively straightforward. Swift is also syntactically much closer to JavaScript than Objective-C is, which eased the porting effort.

The main challenge during this process was moving from the dynamic type system of JS to the statically typed Swift, requiring a few formal structs and enums in places to replace ad-hoc JS objects.

However, once I had implemented the basic rendering engine and the quad-tree tiling system for terrain and imagery, I was a little bit disappointed with the single-threaded performance, particularly once multiple imagery layers and horizon views were tested. Performance rapidly became bound by draw call and uniform setting overhead, and I started looking at Metal as an alternative.

Metal Basics

I won’t rehash the excellent information over at Warren Moore’s site, which was invaluable when I was implementing the renderer, but I’ll add a few more words about the challenges during the process.

Metal is a low-overhead 3D rendering API for Apple’s A7+ SoC iOS devices, and more recently for current Intel, Nvidia, and AMD GPUs on OS X 10.11+.

Broadly, this is achieved by the removal of extensive state-checking and validation in favor of predefined renderer states, which are rapidly switched between during drawing, and a deferred rendering execution model where commands are encoded on the CPU, then bulk-delivered to the GPU per-frame.

iOS devices keep buffers and textures, which are merely specialized buffers, in shared memory between CPU and GPU. The graphics engine rather than the driver is then responsible for maintaining buffer coherency. On devices with dedicated GPU memory, this requires buffer syncing semantics between CPU and GPU memory, although this can be done efficiently at frame boundaries.

iOS 9 and OS X 10.11 bring further improvements to Metal with the addition of MetalKit. This provides the MTKView class, which simplifies Metal-backed view creation and render callbacks. I haven’t worked with the MTKTextureLoader, Model I/O, or Metal Performance Shader tools, so I won’t comment on these.

Compiled Render Pipeline State

For most general 3D rendering, the vertex and fragment shaders and vertex buffer descriptor used to draw each geometry don’t change from frame to frame, so Metal optimizes shader program usage by precompiling shaders and vertex buffer layout into a MTLRenderPipelineState object.

This also stores some renderer states previously stored in the Cesium RenderState object, such as color mask and blending. The resulting pipeline objects are then cached, making subsequent state switching very rapid.
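The caching idea can be sketched in plain Swift. This is a minimal illustration, not CesiumKit's actual code: `PipelineKey` and `PipelineCache` are hypothetical names, and the cached value is generic so the sketch runs without a GPU. In a Metal renderer the cached value would be a MTLRenderPipelineState and the `compile` closure would build it from a descriptor.

```swift
// Hypothetical cache key: everything that is baked into a compiled pipeline.
// A real key would also cover the vertex layout and attachment pixel formats.
struct PipelineKey: Hashable {
    var vertexShader: String
    var fragmentShader: String
    var blendingEnabled: Bool
    var colorMask: UInt8
}

// Compiles a pipeline the first time a key is seen, then reuses it.
// Not thread-safe; a real renderer would guard the dictionary with a lock.
final class PipelineCache<Pipeline> {
    private var cache: [PipelineKey: Pipeline] = [:]
    private let compile: (PipelineKey) -> Pipeline
    private(set) var compileCount = 0

    init(compile: @escaping (PipelineKey) -> Pipeline) {
        self.compile = compile
    }

    func pipeline(for key: PipelineKey) -> Pipeline {
        if let cached = cache[key] {
            return cached            // fast path: a dictionary lookup only
        }
        compileCount += 1
        let pipeline = compile(key)  // slow path: happens once per unique key
        cache[key] = pipeline
        return pipeline
    }
}

// Usage: a String stands in for the compiled pipeline object.
let cache = PipelineCache<String> { key in
    "compiled(\(key.vertexShader)+\(key.fragmentShader))"
}
let key = PipelineKey(vertexShader: "globeVS", fragmentShader: "globeFS",
                      blendingEnabled: false, colorMask: 0b1111)
let first = cache.pipeline(for: key)
let second = cache.pipeline(for: key)
```

Requesting the same key twice compiles only once, which is what makes switching between already-seen states cheap.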

Shared CPU/GPU memory for buffers and textures

The benefits of lower driver overhead for maximizing draw-calls per frame have been widely publicized, but a less-obvious but significant advantage is support for uniform buffers and out-of-loop texture and vertex uploads.

Metal buffers are untyped memory collections that can be used for vertex or uniform information. They are provided to draw calls as attachments, and the structure is given by a MTLVertexDescriptor object for vertices and as input and output structs in the case of uniforms.

This makes manipulation much easier, as setting uniforms and vertices becomes a simple memcpy() call into a CPU-based buffer rather than needing to set and upload each uniform individually.
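As a rough illustration of that single-copy update, the sketch below writes a whole uniform struct into raw memory in one call. `FrameUniforms` and `writeUniforms` are hypothetical names, and a manually allocated block stands in for the pointer a Metal buffer exposes through `contents()`. The layout of the Swift struct is assumed to match the struct declared in the shader.

```swift
// Hypothetical uniform block; its layout must match the shader-side struct.
struct FrameUniforms {
    var color: SIMD4<Float>
    var time: Float
}

// Copies every field in one memcpy-style call instead of one call per uniform.
func writeUniforms(_ uniforms: FrameUniforms, to destination: UnsafeMutableRawPointer) {
    withUnsafeBytes(of: uniforms) { bytes in
        destination.copyMemory(from: bytes.baseAddress!, byteCount: bytes.count)
    }
}

// Writes a value and reads it back, using heap memory as a stand-in for
// the CPU-visible contents of a GPU buffer.
func roundTrip(_ uniforms: FrameUniforms) -> FrameUniforms {
    let storage = UnsafeMutableRawPointer.allocate(
        byteCount: MemoryLayout<FrameUniforms>.stride,
        alignment: MemoryLayout<FrameUniforms>.alignment)
    defer { storage.deallocate() }
    writeUniforms(uniforms, to: storage)
    return storage.load(as: FrameUniforms.self)
}

let readBack = roundTrip(FrameUniforms(color: SIMD4<Float>(1, 0, 0, 1), time: 2.5))
```

The same pattern applies to vertex data: fill a Swift array or struct, then copy it into the buffer in one call.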

CesiumKit Metal Renderer Design

The basic design of Cesium’s renderer is detailed here and here. The first iteration on iOS was a straight port from WebGL to OpenGL ES 2.0 as supported by iOS.

Within the initial OpenGL ES renderer port, as with most non-trivial GPU engines, several state objects are created and managed to facilitate efficient GL state changes. With Metal, much of this is packaged once into compiled MTLRenderPipelineState and MTLRenderPassDescriptor, as described above.

Consequently the RenderState object, which contains information about the current renderer pipeline, and the PassState object, which contains information about the current renderer pass, have been repurposed into descriptor objects that wrap the predefined state. These can be created or set from the Scene level of the framework and then passed into the renderer to configure renderer passes.

A new RenderPipeline object wraps a compiled MTLRenderPipelineState, including compiled shaders and vertex attribute information. Switching state is then as simple as specifying the required pipeline when creating a RenderPass.

This also means that executing draw commands within the renderer is simplified, as much of the state that was previously set per call in the GL renderer is now set once on, or precompiled into, a RenderPass.

A RenderPass stores the MTLRenderPassDescriptor object and other pass-specific information, and also packages up render commands and provides them to a MTLRenderCommandEncoder, which passes them to the GPU.

A MTLRenderCommandEncoder, which encodes the draw commands for a render pass, is created and completed through a RenderPass object. It is configured by the underlying MTLRenderPassDescriptor, whose loadAction and storeAction properties specify the load and store actions for each attachment.

These actions correspond to the OpenGL clear command. Each texture attachment can be cleared if required at the start of a render pass, or the initial state ignored for efficiency if all the pixels in the texture will be written during the pass. At the end of the pass the texture can be stored (for a color texture used as a render target) or discarded in the case of depth or stencil textures.
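A minimal sketch of such a pass configuration is shown below, using current Swift names for the Metal API (the Swift 2 era spellings differed slightly). `makePassDescriptor` and its parameters are hypothetical, and the clear values are arbitrary; it only illustrates the clear, ignore, store, and discard choices described above.

```swift
import Metal

// Hypothetical helper: builds a pass that optionally clears color,
// always clears depth, keeps the color result, and discards depth.
func makePassDescriptor(color: MTLTexture?,
                        depth: MTLTexture?,
                        clearColor: Bool) -> MTLRenderPassDescriptor {
    let pass = MTLRenderPassDescriptor()

    // Color: clear at the start only if requested; otherwise tell Metal the
    // previous contents are irrelevant because every pixel will be written.
    pass.colorAttachments[0].texture = color
    pass.colorAttachments[0].loadAction = clearColor ? .clear : .dontCare
    pass.colorAttachments[0].clearColor = MTLClearColor(red: 0, green: 0, blue: 0, alpha: 1)
    pass.colorAttachments[0].storeAction = .store   // keep the rendered image

    // Depth: cleared every pass and not needed once the pass has finished.
    pass.depthAttachment.texture = depth
    pass.depthAttachment.loadAction = .clear
    pass.depthAttachment.clearDepth = 1.0
    pass.depthAttachment.storeAction = .dontCare

    return pass
}

// Usage: textures are left nil here so the sketch needs no GPU device.
let pass = makePassDescriptor(color: nil, depth: nil, clearColor: true)
```

Because the descriptor carries the clear behavior, there is no separate clear call to issue before drawing.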

The concept of an OpenGL framebuffer is replaced by an object conforming to the MTLDrawable protocol, typically a texture. Rendering to the screen is managed by the system via a MTKView, which provides a UIView backed by a drawable texture. The MTKView drawable has attachments for color, depth, and stencil (or combined depth/stencil in iOS 9/OS X 10.11) textures.

For offscreen rendering (rendering to texture: Metal has no render buffer concept), the previous GL Framebuffer becomes a simple struct containing color, depth, and stencil textures.

The overall flow of the render() call in the Metal renderer context remains much the same, with a pre-render pass using GPGPU for off-screen texture reprojection required to convert Web Mercator-projected textures to plate carrée to wrap onto the globe. Texture and vertex uploads are moved to background threads.
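The math behind that reprojection can be sketched on the CPU. This is only an illustration of the mapping, not the GPU pass itself: for each row of the plate carrée output, it finds which row of the Web Mercator source to sample. The function names are hypothetical and latitudes are in radians.

```swift
import Foundation

// Web Mercator "y" (the Mercator angle) for a geodetic latitude in radians.
func mercatorAngle(fromLatitude latitude: Double) -> Double {
    return log(tan(Double.pi / 4 + latitude / 2))
}

// Given a vertical texture coordinate v in [0, 1] across a tile in the
// geographic (plate carrée) output, returns the vertical coordinate to
// sample in the Web Mercator source tile covering the same latitude range.
func mercatorTextureCoordinate(forGeographicV v: Double,
                               south: Double,
                               north: Double) -> Double {
    let latitude = south + v * (north - south)       // linear in latitude
    let ySouth = mercatorAngle(fromLatitude: south)
    let yNorth = mercatorAngle(fromLatitude: north)
    // Normalize the Mercator angle back into the source tile's [0, 1] range.
    return (mercatorAngle(fromLatitude: latitude) - ySouth) / (yNorth - ySouth)
}

// Usage: a tile from the equator to 60 degrees north. The middle of the
// geographic tile maps below the middle of the Mercator tile, because
// Mercator stretches high latitudes.
let middle = mercatorTextureCoordinate(forGeographicV: 0.5,
                                       south: 0,
                                       north: Double.pi / 3)
```

The horizontal coordinate needs no correction, since both projections are linear in longitude.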

Essentially, the actions required to render a frame are:

- Obtain the next valid MTLDrawable from the MTKView (which contains the color texture for final rendering).

- Create a MTLCommandBuffer to accept render commands.

- Create a MTLCommandEncoder to represent each render pass, with a RenderPass (MTLRenderPassDescriptor) for each one to handle color and depth attachment clearing as required.

- For each draw call, set the pre-compiled RenderPipeline (MTLRenderPipelineState), the vertex and uniform buffers, and the texture objects.

- Call drawIndexedPrimitives().

- End encoding on the command encoders and commit the command buffer, which triggers GPU frame processing.
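The steps above can be sketched roughly as follows, using current Swift names for the Metal and MetalKit APIs. This is a simplified single-pass outline rather than CesiumKit's implementation: `DrawCall` and `renderFrame` are hypothetical, the buffer indices are arbitrary, and the view's own pass descriptor stands in for a RenderPass.

```swift
import Metal
import MetalKit

// Hypothetical bundle of what one draw call needs.
struct DrawCall {
    var pipeline: MTLRenderPipelineState
    var vertexBuffer: MTLBuffer
    var uniformBuffer: MTLBuffer
    var texture: MTLTexture?
    var indexBuffer: MTLBuffer
    var indexCount: Int
}

func renderFrame(in view: MTKView, queue: MTLCommandQueue, drawCalls: [DrawCall]) {
    guard
        // Obtain the next drawable and the pass descriptor targeting it.
        let drawable = view.currentDrawable,
        let passDescriptor = view.currentRenderPassDescriptor,
        // Create a command buffer to accept render commands.
        let commandBuffer = queue.makeCommandBuffer(),
        // Create an encoder representing this render pass.
        let encoder = commandBuffer.makeRenderCommandEncoder(descriptor: passDescriptor)
    else { return }

    for call in drawCalls {
        // Bind the precompiled pipeline, buffers, and textures.
        encoder.setRenderPipelineState(call.pipeline)
        encoder.setVertexBuffer(call.vertexBuffer, offset: 0, index: 0)
        encoder.setVertexBuffer(call.uniformBuffer, offset: 0, index: 1)
        encoder.setFragmentBuffer(call.uniformBuffer, offset: 0, index: 0)
        encoder.setFragmentTexture(call.texture, index: 0)
        // Encode the draw itself.
        encoder.drawIndexedPrimitives(type: .triangle,
                                      indexCount: call.indexCount,
                                      indexType: .uint16,
                                      indexBuffer: call.indexBuffer,
                                      indexBufferOffset: 0)
    }

    // Finish the pass, schedule presentation, and hand the work to the GPU.
    encoder.endEncoding()
    commandBuffer.present(drawable)
    commandBuffer.commit()
}
```

Nothing reaches the GPU until `commit()` is called, which is why encoding can be cheap and, in principle, spread across threads.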

The system has only a limited pool of drawable textures (as they are at screen resolution, which can be quite large for a Retina iPad display), so the initial call to obtain a drawable is protected by a semaphore to prevent the renderer getting too far ahead of itself.
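The semaphore pattern can be shown without any Metal code. In this sketch a dispatch queue plays the part of the GPU, and `FramePacer` is a hypothetical name; the limit of three frames in flight is an assumption for illustration. In a Metal renderer, `frameCompleted()` would be called from the command buffer's completion handler.

```swift
import Dispatch

// Limits how many frames may be queued before the CPU has to wait.
final class FramePacer {
    private let semaphore: DispatchSemaphore
    private let stateQueue = DispatchQueue(label: "pacer.state")
    private var inFlight = 0
    private var peak = 0

    init(maxFramesInFlight: Int) {
        semaphore = DispatchSemaphore(value: maxFramesInFlight)
    }

    // Call before asking for a drawable; blocks while all slots are in use.
    func beginFrame() {
        semaphore.wait()
        stateQueue.sync {
            inFlight += 1
            peak = max(peak, inFlight)
        }
    }

    // Call when the GPU reports that a frame has finished.
    func frameCompleted() {
        stateQueue.sync { inFlight -= 1 }
        semaphore.signal()
    }

    // Highest number of simultaneously queued frames seen so far.
    var peakInFlight: Int { stateQueue.sync { peak } }
}

// Usage: submit ten "frames" to a queue that stands in for the GPU.
let pacer = FramePacer(maxFramesInFlight: 3)
let fakeGPU = DispatchQueue(label: "fake.gpu")
let allDone = DispatchGroup()
for _ in 0..<10 {
    pacer.beginFrame()              // waits here once three frames are queued
    allDone.enter()
    fakeGPU.asyncAfter(deadline: .now() + .milliseconds(2)) {
        pacer.frameCompleted()      // frees a slot for the render loop
        allDone.leave()
    }
}
allDone.wait()
```

The render loop never runs more than the allowed number of frames ahead, however slow the consumer is.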

Metal Shading Language (MSL)

The other challenge in porting is that Cesium’s shaders are written in GLSL (OpenGL Shading Language) whereas Metal has its own C++-based language called Metal Shading Language.

Luckily, the Unity engine developers have created a very useful extension to their excellent GLSL optimizer, which will cross-compile GLSL shaders to MSL.

This tool is primarily written for offline use; however, it’s written in very portable C++ so I was able to write a simple Objective-C++ wrapper and generate a framework, which is callable from Swift. This runs on the device, and rewrites and optimizes the GLSL shaders generated by the existing Cesium shader pipeline into MSL code, as they are required to package into the renderer pipeline state objects. This can also be done in the background. A few patches have been made to support Cesium-specific shaders, and these can be found in the GitHub repository above.

Generated Metal shaders can then be compiled either offline as part of application compilation by Xcode, or online from source. There are command-line tools for compilation but they seem to only be able to compile for a single device class.

The best performance is obtained if shaders are compiled offline, but in a renderer like Cesium’s, where shaders can vary at runtime to account for changes in lighting or scene mode, online compilation is required. This can still be done off the render thread, as Metal supports asynchronous compilation, which fires a callback when the compiled shaders are ready for use. The resulting GPU function pointers can then be cached.

Here’s a comparison of example GLSL vertex and fragment shaders to draw a viewport-aligned quad, with their corresponding Metal versions generated by the optimizer:

Vertex Shader - GLSL

```glsl
attribute vec4 position;
attribute vec2 textureCoordinates;

varying vec2 v_textureCoordinates;

void main() {
    gl_Position = position;
    v_textureCoordinates = textureCoordinates;
}
```

Vertex Shader - Metal

```metal
#include <metal_stdlib>
using namespace metal;

struct xlatMtlShaderInput {
    float4 position [[attribute(0)]];
    float2 textureCoordinates [[attribute(1)]];
};

struct xlatMtlShaderOutput {
    float4 gl_Position [[position]];
    float2 v_textureCoordinates;
};

vertex xlatMtlShaderOutput xlatMtlMain (xlatMtlShaderInput _mtl_i [[stage_in]]) {
    xlatMtlShaderOutput _mtl_o;
    _mtl_o.gl_Position = _mtl_i.position;
    _mtl_o.v_textureCoordinates = _mtl_i.textureCoordinates;
    return _mtl_o;
}
```

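Fragment Shader - GLSL

```glsl
struct czm_material
{
    vec3 diffuse;
    float specular;
    float shininess;
    vec3 normal;
    vec3 emission;
    float alpha;
};

uniform vec4 color;

czm_material czm_getMaterial() {
    // The listing as published used `material` without declaring it.
    // It is declared here, with emission zeroed so the result matches
    // the Metal output below.
    czm_material material;
    material.emission = vec3(0.0);
    material.alpha = color.a;
    material.diffuse = color.rgb;
    return material;
}

varying vec2 v_textureCoordinates;

void main() {
    czm_material material = czm_getMaterial();
    gl_FragColor = vec4(material.diffuse + material.emission, material.alpha);
}
```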

Fragment Shader - Metal

```metal
#include <metal_stdlib>
using namespace metal;

struct xlatMtlShaderInput {
};

struct xlatMtlShaderOutput {
    float4 gl_FragColor;
};

struct xlatMtlShaderUniform {
    float4 color;
};

fragment xlatMtlShaderOutput xlatMtlMain (xlatMtlShaderInput _mtl_i [[stage_in]], constant xlatMtlShaderUniform& _mtl_u [[buffer(0)]]) {
    xlatMtlShaderOutput _mtl_o;
    float4 tmpvar_1;
    tmpvar_1.xyz = _mtl_u.color.xyz;
    tmpvar_1.w = _mtl_u.color.w;
    _mtl_o.gl_FragColor = tmpvar_1;
    return _mtl_o;
}
```

The only significant incompatibility between GLSL and MSL is the latter’s lack of support for arrays of textures. Cesium’s globe surface tile shader uses such an array to provide the list of imagery tiles to render onto a single terrain tile where imagery and terrain overlap.

This was solved trivially by replacing the texture array with individually indexed textures (u_dayTexture0, u_dayTexture1, …) in the GLSL shader, which the cross-compiler happily accepts.
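As an illustration of the replacement, the indexed declarations could be generated rather than written by hand. The helper below is hypothetical (the post does not say how the shader source was edited); only the `u_dayTexture0`, `u_dayTexture1`, … naming comes from the text above.

```swift
// Hypothetical helper: emits one sampler uniform per imagery layer in place
// of a single array declaration such as `uniform sampler2D name[N];`.
func indexedTextureUniforms(baseName: String, count: Int) -> String {
    return (0..<count)
        .map { "uniform sampler2D \(baseName)\($0);" }
        .joined(separator: "\n")
}

// Usage: declarations for three overlapping imagery layers.
let declarations = indexedTextureUniforms(baseName: "u_dayTexture", count: 3)
```

Any loop in the shader that indexed the array would need to be unrolled to reference the individual names in the same way.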

Advantages

The main advantage is rendering speed. Both a faster API and better multithreading have enabled far more consistent rendering, at 60fps in most cases. Background resource upload and processing are a particularly significant benefit for an app like Cesium, which relies on streaming in terrain and imagery data as the globe is navigated.

The use of buffers for uniform storage also has the potential for significant performance benefits, as these can be set once and reused each frame.

Another small advantage is that Metal supports wireframe-based rendering with one simple call, which greatly simplifies debugging.

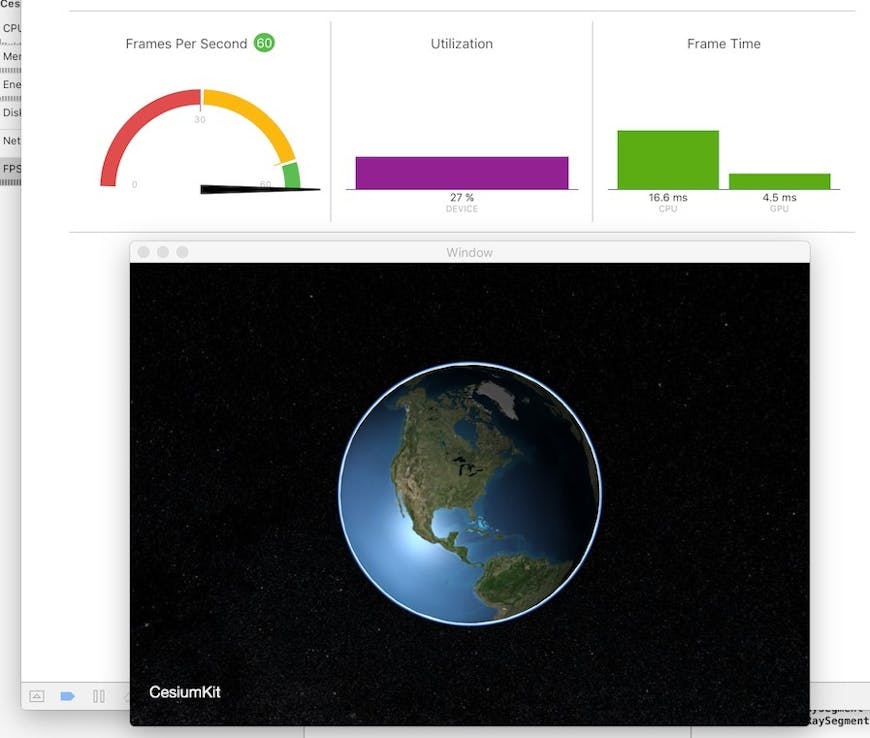

The Metal System Trace tool allows visualization of the CPU and GPU activity across frame rendering.

Disadvantages

Obviously this has required a rewrite of the existing GL renderer, and a loss of backward compatibility with older devices.

This has become less of an issue given that OS X now supports Metal, although following the open-sourcing of Swift and subsequent Linux implementation, an OpenGL renderer implementation may remain desirable for Linux support. It should be relatively straightforward to abstract out the changes to the renderer design to support both OpenGL and Metal backends.

The rewrite took about five months, from May to October 2015. This was only a part-time endeavor, and it included getting texturing and texture reprojection working as well as adding an OS X target, so it would likely be much quicker for someone working full-time.

The Renderer layer in Cesium does largely encapsulate the implementation details of Metal vs. OpenGL/WebGL, so minimal changes should be needed going forward as more of the Scene and DataSource layers are implemented.

Compatibility

iOS 9.0

- A7/A8 processor and newer

OS X 10.11

- Nvidia GeForce GTX 400 series and newer (may require Nvidia Web drivers)

- Intel HD4000 and newer (Ivy Bridge or newer)

- AMD HD7000 and newer

Performance

All tests run on an iPad Air 2 running iOS 9.2.1 (13D15) at retina resolution. Builds compiled with -O and Whole Module Optimisation, and run with Metal API validation disabled. TileCoordinateImageryProvider used to eliminate network effects. Lighting, atmosphere, terrain, depth textures, skybox, support for oriented bounding box culling volumes, and FXAA are all disabled as these aren’t supported in the GL renderer currently. CPU utilization is out of 300% (the A8X is a triple-core design).

Static globe view (26 tiles rendered):

| Renderer | FPS | CPU% | GPU Frame Time (ms) |
|---|---|---|---|
| Metal | 60 | 53% | 1.8 |
| GL ES | 60 | 66% | 2.6 |

Horizon view:

| Renderer | FPS | CPU% | GPU Frame Time (ms) |
|---|---|---|---|
| Metal | 60 | 103% | 3.7 |
| GL ES | 29 | 91% | 2.8 |

| Renderer | FPS | CPU% | GPU Frame Time (ms) |
|---|---|---|---|
| Metal | 60 | 101% | 2.7 |
| GL ES | 58 | 85% | 3.1 |

| Renderer | FPS | CPU% | GPU Frame Time (ms) |
|---|---|---|---|
| Metal | 60 | 71% | 2.2 |
| GL ES | 58 | 77% | 2.9 |

This is a slightly unfair comparison, as Metal allows the use of buffers to store uniforms, which is a feature of GL ES 3.0 and in the upcoming WebGL 2. However, the Metal renderer still resets all uniforms each frame, which is an obvious target for improvement.

As you can see, the OpenGL ES 2.0 renderer keeps pace with the Metal renderer during static rendering of a low number of tiles, and generally performs reasonably in the dynamic tests with limited tiles rendered, although the effect of more efficient command upload and processing in the Metal implementation can be seen in the reduced per-frame CPU render time.

The Metal renderer pulls away when rendering a horizon view with significantly more visible tiles and therefore commands to execute per frame. As the number of draw calls per frame increases, the OpenGL renderer becomes progressively more CPU-bound. The GPU is clearly starved for work during OpenGL rendering as the GPU frame time remains low.

The CPU utilization of 91% for GL ES while drawing the horizon view suggests that the CPU is saturated, as the GL renderer is single-threaded. That limits it to 29fps (in that particular scenario). Since Metal is more efficient at encoding the draw calls, it can hit 60fps without saturating the renderer thread, and is using (some) threading, which is why utilization is greater than 100%.

Optimizations

Even with uniform buffers, setting uniforms still occupies a significant proportion of the time spent in the render loop. I’m looking at optimizing this both by reducing the number of uniforms set per frame (most of which don’t often change and can be set once when the draw command is created), and by potentially multithreading uniform setting by factoring it out of draw command execution. Early work on this has shown performance benefits of 25-50%, particularly on complex horizon views.

Tangential to graphics is the much improved support for SIMD (Single Instruction Multiple Data) instructions in Swift 2. This enables much better matrix and vector performance, which Cesium relies heavily on for geometry. I’ve reimplemented Cesium’s vector and matrix types to be backed by SIMD, and will be looking at other opportunities to use SIMD for geometry generation and other performance hotspots as development continues.
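A SIMD-backed vector type might look roughly like the sketch below. It uses the standard library's `SIMD3<Double>`, which arrived in later Swift versions than the Swift 2 described here (where the `simd` module's types would have been used), and the `Cartesian3` shown is a hypothetical stand-in rather than CesiumKit's actual type.

```swift
// Hypothetical SIMD-backed 3D vector, loosely modeled on Cesium's Cartesian3.
struct Cartesian3 {
    var storage: SIMD3<Double>

    init(x: Double, y: Double, z: Double) {
        storage = SIMD3(x, y, z)
    }

    init(_ storage: SIMD3<Double>) {
        self.storage = storage
    }

    // Component-wise addition is a single vector operation.
    static func + (lhs: Cartesian3, rhs: Cartesian3) -> Cartesian3 {
        return Cartesian3(lhs.storage + rhs.storage)
    }

    // Multiply lanes together, then sum them.
    func dot(_ other: Cartesian3) -> Double {
        return (storage * other.storage).sum()
    }

    func cross(_ other: Cartesian3) -> Cartesian3 {
        let a = storage
        let b = other.storage
        return Cartesian3(SIMD3(a.y * b.z - a.z * b.y,
                                a.z * b.x - a.x * b.z,
                                a.x * b.y - a.y * b.x))
    }

    var magnitude: Double {
        return dot(self).squareRoot()
    }
}

// Usage
let a = Cartesian3(x: 1, y: 2, z: 3)
let b = Cartesian3(x: 4, y: 5, z: 6)
let dotProduct = a.dot(b)
let zAxis = Cartesian3(x: 1, y: 0, z: 0).cross(Cartesian3(x: 0, y: 1, z: 0))
```

Keeping the components in one SIMD value lets the compiler use vector instructions for the arithmetic instead of three scalar operations.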

There is also much more that could be done to improve performance with the generally improved multithreading capability inherent to native code, and more specifically with Metal’s support for background resource loading and multithreaded command queuing.

I’ve implemented FXAA anti-aliasing; however, this is associated with a significant performance hit, at least on iOS, and I plan on looking at optimizations.

I’m also hoping to fully implement Kai Ninomiya’s excellent work on rectangular bounding volumes soon for greater efficiency with horizon views. There is partial support already.

Future

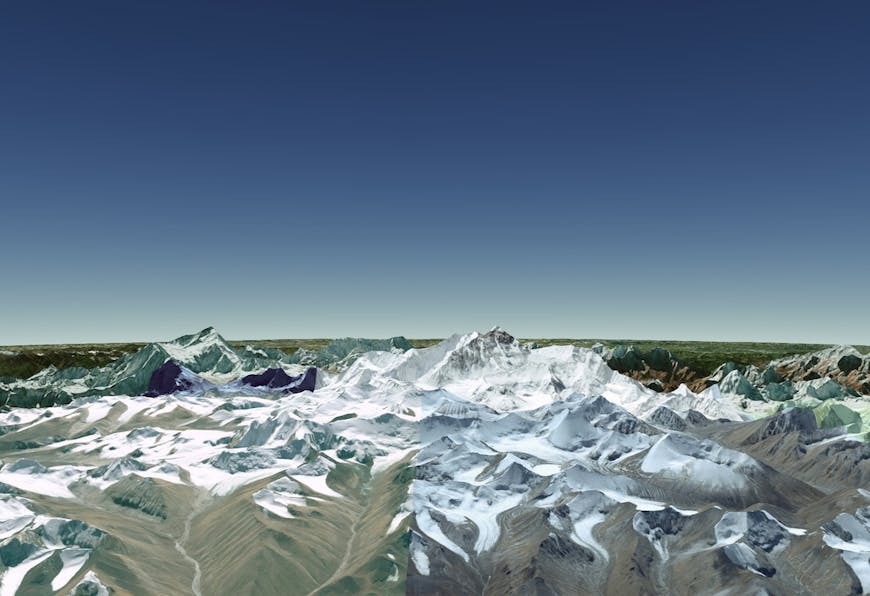

The Metal renderer as it stands can draw the base globe with multiple imagery layers, perform Web Mercator reprojection, and render terrain, atmosphere, lighting, and animated water effects.

There is a text-renderer based on Warren’s implementation, although this needs more support at higher abstraction levels and does not quite align with Cesium’s current Label and Billboard types.

The Cesium project is not just a pretty globe, but also a vast set of tools implemented upon the 2D and 3D maps to visualize geospatial data. Now that the basics are in place, the focus can shift to bringing over more of these tools to the CesiumKit port.

Going forward, implementing more of the DataSources layer will allow geometry and other features to be drawn on the globe as in Cesium’s WebGL renderer. The ViewportQuad type has been ported with a simple Color Material as an example.

Conclusion

On iOS in particular, the limitations of OpenGL’s state machine and threading model limit performance for a CPU-bound streaming renderer like Cesium’s. Using the new Metal renderer provides significant performance benefits and will allow much richer and more complex applications to be built using CesiumKit on iOS.

The performance benchmarks comparing the Metal and OpenGL ES renderers confirm the benefits of low draw call overhead in the new API, with far more draw calls possible per-frame without affecting performance.

Where can I get it?

The code is available on GitHub. Build instructions are in README.md, and are largely plug and play, although there is a first-time build script to run to pull down the Alamofire, PMJSON, and GLSLOptimizer dependencies.

The current source contains both OS X and iOS library and test app targets and requires iOS 9 or OS X 10.11 and Xcode 7.2 with Swift 2.0. I’ve been using the Xcode 7.3 and Swift 2.2 betas.

Have fun!