Graphics Tech in Cesium - Rendering a Frame

This article traces through Cesium’s Scene.render to explain how Cesium 1.9 renders a frame using its WebGL renderer. Put a breakpoint in Scene.render, run a Cesium app, and follow along.

Because Cesium focuses on visualizing geospatial content, scenes with many different light sources are uncommon, so Cesium uses a traditional forward-shading pipeline. Cesium’s pipeline is unusual in that it uses multiple frustums to support massive view distances without z-fighting artifacts [Cozzi13].

Setup

Cesium stores constants with the lifetime of a frame in a FrameState object. At the start of the frame, this is initialized with values such as the camera parameters and simulation time. The frame state is made available to other objects, such as primitives that generate commands (draw calls), throughout the frame.

UniformState is part of the frame state with common precomputed shader uniforms. At the start of the frame, uniforms such as the view matrix and sun vector are computed.

Update

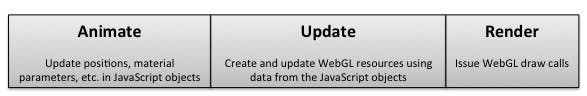

Cesium has a classic animate/update/render pipeline, where the animate step may move primitives (Cesium’s term for objects that can be rendered), change material properties, add/remove primitives, etc., without interacting with WebGL. This step is not part of Scene.render; it may happen in application code by explicitly setting properties before a frame is rendered, or it may happen implicitly in Cesium by assigning time-varying values using the Entity API.

A classic animate/update/render pipeline.

The first major step for Scene.render is to update all the primitives in the scene.

In this step, each primitive

- Creates/updates its WebGL resources, i.e., compiles/links shaders, uploads textures, updates vertex buffers, etc. Cesium never calls WebGL outside of Scene.render since doing so can throw off the timing of requestAnimationFrame and would make it hard to integrate with other WebGL engines.

- Returns a list of DrawCommand objects that represent draw calls and reference WebGL resources created by the primitive. Some primitives, like a polyline or billboard collection, may return a single command, whereas other primitives, like the globe or a 3D model, may return hundreds of commands. Most frames have a few hundred to a few thousand commands.
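The update step can be sketched roughly as follows. This is a simplified illustration, not Cesium's actual code: `SketchPrimitive`, `updatePrimitives`, and the fields on the frame state and command objects are hypothetical names chosen for the example, and strings stand in for real WebGL resources.

```javascript
// Simplified sketch; all names and fields are illustrative, not Cesium's API.
class SketchPrimitive {
  constructor(name, batchCount) {
    this.name = name;
    this.batchCount = batchCount; // how many draw calls this primitive needs
    this.resources = null; // stands in for WebGL buffers, textures, shaders
  }

  // Called once per frame from inside the render function.
  update(frameState) {
    if (this.resources === null) {
      // A real engine would compile shaders and upload buffers here, lazily.
      this.resources = {
        vertexArray: `${this.name}-va`,
        shaderProgram: `${this.name}-sp`,
      };
    }
    // Emit one command per draw call; each references the primitive's resources.
    for (let i = 0; i < this.batchCount; i++) {
      frameState.commandList.push({
        owner: this,
        vertexArray: this.resources.vertexArray,
        shaderProgram: this.resources.shaderProgram,
        pass: "OPAQUE",
      });
    }
  }
}

function updatePrimitives(primitives, frameState) {
  frameState.commandList.length = 0; // commands are regenerated every frame
  for (const primitive of primitives) {
    primitive.update(frameState);
  }
  return frameState.commandList;
}

const sketchFrameState = { frameNumber: 1, time: 0.0, commandList: [] };
const sketchCommands = updatePrimitives(
  [new SketchPrimitive("billboards", 1), new SketchPrimitive("globe", 3)],
  sketchFrameState
);
console.log(sketchCommands.length); // 4
```

The billboard-like primitive contributes one command and the globe-like primitive contributes three, mirroring the single-command versus many-command cases described above.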

The Globe, which is Cesium’s terrain and imagery engine, is a primitive. Its update function handles hierarchical level of detail and culling, and out-of-core memory management for loading terrain and imagery tiles.

The Potentially Visible Set

Culling is a common optimization that graphics engines use to quickly eliminate objects that are out of view so the rest of the pipeline doesn’t have to process them. The objects that pass the visibility test are the Potentially Visible Set and continue down the pipeline. They are potentially visible because an inexact conservative visibility test is used for speed.

Cesium supports automatic view frustum and horizon culling [Ring13a, Ring13b] for individual commands using each command’s world-space boundingVolume. Primitives that perform their own culling, such as Globe, can disable this.
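A conservative view-frustum test against a bounding sphere might look like the sketch below. It is a generic illustration of the technique rather than Cesium's implementation: the plane representation, the `isPotentiallyVisible` and `cullCommands` helpers, and the per-command `cull` flag used to opt out are assumptions made for the example, and horizon culling is not shown.

```javascript
// Conservative sphere-vs-planes visibility test (illustrative sketch).
// Each plane is { normal: [x, y, z], distance: d } with a unit normal pointing
// toward the inside of the culling volume, so a point p is on the inner side
// of the plane when dot(normal, p) + distance >= 0.
function isPotentiallyVisible(planes, sphere) {
  const [cx, cy, cz] = sphere.center;
  for (const plane of planes) {
    const [nx, ny, nz] = plane.normal;
    const signedDistance = nx * cx + ny * cy + nz * cz + plane.distance;
    if (signedDistance < -sphere.radius) {
      return false; // entirely behind one plane: definitely not visible
    }
  }
  // Not provably outside. The sphere may still be invisible (for example,
  // near a corner of the volume), which is why the result is only "potentially".
  return true;
}

// Commands that handle their own culling opt out with cull: false (assumed flag).
function cullCommands(planes, commands) {
  return commands.filter(
    (c) => c.cull === false || isPotentiallyVisible(planes, c.boundingVolume)
  );
}

// A toy culling volume: the slab -10 <= x <= 10.
const slabPlanes = [
  { normal: [1, 0, 0], distance: 10 },
  { normal: [-1, 0, 0], distance: 10 },
];
const culledNames = cullCommands(slabPlanes, [
  { name: "edge", boundingVolume: { center: [12, 0, 0], radius: 3 } },
  { name: "outside", boundingVolume: { center: [20, 0, 0], radius: 3 } },
  { name: "selfCulled", cull: false, boundingVolume: { center: [100, 0, 0], radius: 1 } },
]).map((c) => c.name);
console.log(culledNames); // [ 'edge', 'selfCulled' ]
```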

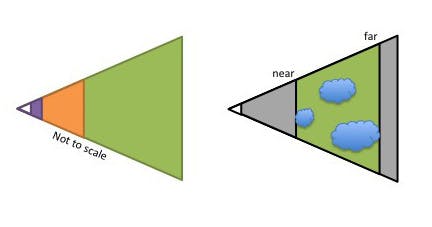

A traditional graphics engine could find the potentially visible set by checking the visibility test for each command. Cesium’s createPotentiallyVisibleSet goes a step further and dynamically divides the commands into multiple frustums (usually three) that bound all commands and maintain a constant far-to-near ratio to avoid z-fighting. Each frustum has the same field of view and aspect ratio; only the near and far plane distances differ. As an optimization, this function exploits temporal coherence and will reuse the frustums computed last frame if they are still reasonable for this frame’s commands.

Left: multiple frustums. Right: commands in a frustum
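The frustum partitioning described above can be sketched as follows. This is a minimal sketch under stated assumptions, not the body of createPotentiallyVisibleSet: `computeFrustums`, `insertCommand`, and the `nearDistance`/`farDistance` fields (the range of distances a command's bounding volume spans from the camera) are hypothetical names, the ratio of 1000 is only an example value, and the temporal-coherence reuse is omitted.

```javascript
// Split [near, far] into consecutive frustums, each spanning at most
// farToNearRatio. The last frustum may span less than the full ratio.
function computeFrustums(near, far, farToNearRatio) {
  const count = Math.max(
    1,
    Math.ceil(Math.log(far / near) / Math.log(farToNearRatio))
  );
  const frustums = [];
  for (let i = 0; i < count; i++) {
    frustums.push({
      near: near * Math.pow(farToNearRatio, i),
      far: Math.min(far, near * Math.pow(farToNearRatio, i + 1)),
      commands: [],
    });
  }
  return frustums;
}

// A command is added to every frustum its distance range overlaps,
// so one command can end up in more than one frustum.
function insertCommand(frustums, command) {
  for (const frustum of frustums) {
    if (command.nearDistance <= frustum.far && command.farDistance >= frustum.near) {
      frustum.commands.push(command);
    }
  }
}

const sketchFrustums = computeFrustums(1.0, 1.0e8, 1000.0);
insertCommand(sketchFrustums, { name: "model", nearDistance: 500, farDistance: 2000 });
insertCommand(sketchFrustums, { name: "globeTile", nearDistance: 5.0e6, farDistance: 6.0e6 });

for (const f of sketchFrustums) {
  console.log(f.near, f.far, f.commands.map((c) => c.name));
}
```

With these inputs the range is covered by three frustums, and the command named "model" lands in the first two because its distance range straddles their shared boundary.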

Render

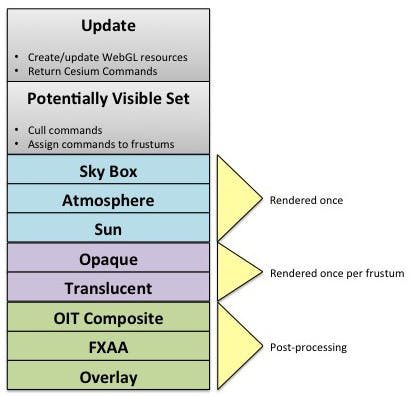

Given the list of frustums, each with a list of commands, we are now ready to execute commands, which will result in calls to WebGL’s drawElements/drawArrays. Follow along in Cesium’s executeCommands as this is the heart of Cesium’s rendering pipeline.

First, the color buffer is cleared. If Order-Independent Transparency (OIT) [McGuire13, Bagnell13] or Fast Approximate Anti-Aliasing (FXAA) is used, their buffers are also cleared (more on these below).

Then, a few special-case primitives are rendered using the entire frustum (not one of the individual computed frustums):

- Sky box for the stars. An old-school optimization was to skip clearing the color buffer by rendering the sky box first. Today, this can actually hurt performance since clearing the color buffer helps GPU compression (the same is true for clearing depth). The best practice is to render the sky box last to take advantage of early-z. Cesium has to render the sky box first, however, because the depth buffer is cleared for each frustum as described below.

- Sky atmosphere. A basic atmosphere from [ONeil05].

- Sun. If the sun is visible, the sun billboard is rendered. If the bloom filter is also enabled, the sun is scissored out, and then several passes are rendered: the color buffer is downsampled, brightened, blurred (in separate horizontal and vertical passes), and then upsampled and additively blended with the original.

Next, starting with the farthest frustum, the commands in each frustum are executed with the following steps:

- Frustum-specific uniform state is set. This is just the frustum’s near and far distances.

- The depth buffer is cleared.

- Commands for opaque primitives are executed first. Executing a command sets up the WebGL state such as render state (depth, blending, etc.), vertex array, textures, shader program, and uniforms, and then issues the draw call.

- Next, translucent commands are executed. If OIT is not supported due to lack of floating-point textures, the commands are sorted back-to-front and then executed. Otherwise, OIT is used to improve visual quality for intersecting translucent objects, and to avoid the CPU overhead of sorting. The commands’ shader is patched for OIT (and cached), and rendered in a single OIT pass if MRT is supported, or two passes as a fallback. See OIT.executeCommands.
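The per-frustum steps above can be sketched as a loop. This is an illustration only, not Cesium's executeCommands: the function and field names are hypothetical, a log of strings stands in for WebGL calls, and only the sorted back-to-front fallback for translucent commands is shown (the OIT path is omitted).

```javascript
// Execute frustums from farthest to nearest, recording what a renderer would do.
function executeFrustums(frustums) {
  const log = [];
  const farToNear = [...frustums].sort((a, b) => b.near - a.near);
  for (const frustum of farToNear) {
    // 1. Frustum-specific uniforms: just the near and far distances.
    log.push(`uniforms ${frustum.near} ${frustum.far}`);
    // 2. Depth from the previous (farther) frustum is no longer meaningful.
    log.push("clearDepth");
    // 3. Opaque commands, in the order the primitives returned them.
    for (const command of frustum.opaque) {
      log.push(`draw ${command.name}`);
    }
    // 4. Translucent commands, sorted back-to-front (non-OIT fallback).
    const backToFront = [...frustum.translucent].sort(
      (a, b) => b.distance - a.distance
    );
    for (const command of backToFront) {
      log.push(`draw ${command.name}`);
    }
  }
  return log;
}

const renderLog = executeFrustums([
  {
    near: 1,
    far: 1000,
    opaque: [{ name: "model" }],
    translucent: [
      { name: "glassNear", distance: 10 },
      { name: "glassFar", distance: 50 },
    ],
  },
  { near: 1000, far: 1000000, opaque: [{ name: "globe" }], translucent: [] },
]);
console.log(renderLog.join("\n"));
```

In the logged order, the far frustum with the globe is drawn first, and within the near frustum the farther translucent object is drawn before the nearer one.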

Using multiple frustums leads to some interesting cases. For example, a command may be executed more than once if it overlaps more than one frustum. See [Cozzi13] for full details.

At this point, the commands for each frustum have been executed. If OIT was used, the final OIT composite pass is executed. If FXAA is enabled, a fullscreen pass is executed for anti-aliasing.

Similar to a Heads-Up Display (HUD), commands for the overlay pass are executed last.

Cesium's current rendering pipeline.

Sorting and Batching

Within each frustum, commands are guaranteed to be executed in the order the primitives returned them. For example, Globe sorts its commands front-to-back to take advantage of GPU early-z optimizations.

Since performance is often dependent on the number of commands, many primitives use batching to reduce the number of commands by combining different objects into one command. For example, BillboardCollection stores as many billboards as possible in one vertex buffer and renders them with the same shader.
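As a rough illustration of batching, the sketch below merges objects that can share render state into one command. It is a generic example, not how BillboardCollection is implemented: `batchByKey` and the `atlas` and `vertices` fields are hypothetical, and a plain array stands in for a vertex buffer.

```javascript
// Combine items that share a key (e.g., the same texture atlas and shader)
// into a single command whose vertex data is the concatenation of theirs.
function batchByKey(items, keyOf) {
  const batches = new Map();
  for (const item of items) {
    const key = keyOf(item);
    if (!batches.has(key)) {
      batches.set(key, { key, vertices: [], itemCount: 0 });
    }
    const batch = batches.get(key);
    batch.vertices.push(...item.vertices);
    batch.itemCount += 1;
  }
  return [...batches.values()]; // one command per distinct key
}

const batchedCommands = batchByKey(
  [
    { atlas: "icons", vertices: [0, 0, 1, 1] },
    { atlas: "icons", vertices: [2, 2, 3, 3] },
    { atlas: "labels", vertices: [4, 4, 5, 5] },
  ],
  (billboard) => billboard.atlas
);
console.log(batchedCommands.length); // 2
```

Three objects become two commands here; the fewer distinct keys there are, the fewer draw calls are issued.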

Picking

Cesium implements picking using the color buffer. Each pickable object is given a unique id (color). To determine what is picked at a given (x, y) in window coordinates, a frame is rendered to an offscreen framebuffer where the colors written are the pick ids. Then, the color is read using WebGL’s readPixels, and is used to return the picked object.
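The id-to-color round trip at the core of this technique can be sketched as below. The `PickRegistry` class and helper names are hypothetical, and a `Uint8Array` stands in for the four bytes that readPixels would return for the picked pixel; the packing shown is one reasonable encoding, not necessarily the one Cesium uses.

```javascript
// Pack a 32-bit pick id into four 8-bit RGBA channels, and back.
function pickIdToColor(id) {
  return [(id >>> 24) & 0xff, (id >>> 16) & 0xff, (id >>> 8) & 0xff, id & 0xff];
}

function colorToPickId(rgba) {
  // ">>> 0" converts the signed 32-bit result back to an unsigned integer.
  return ((rgba[0] << 24) | (rgba[1] << 16) | (rgba[2] << 8) | rgba[3]) >>> 0;
}

class PickRegistry {
  constructor() {
    this.objects = new Map();
    this.nextId = 1; // 0 is reserved for "nothing was picked"
  }

  // Returns the color the object should be drawn with in the pick pass.
  register(object) {
    const id = this.nextId++;
    this.objects.set(id, object);
    return pickIdToColor(id);
  }

  // Maps a pixel read back from the pick framebuffer to the picked object.
  resolve(rgba) {
    return this.objects.get(colorToPickId(rgba));
  }
}

const pickRegistry = new PickRegistry();
const satellite = { name: "satellite" };
const satelliteColor = pickRegistry.register(satellite);

// Stand-in for the 1x1 RGBA/UNSIGNED_BYTE result of readPixels at (x, y).
const pickedPixel = Uint8Array.from(satelliteColor);
console.log(pickRegistry.resolve(pickedPixel) === satellite); // true
console.log(pickRegistry.resolve(new Uint8Array(4))); // undefined (background)
```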

The pipeline for Scene.pick is similar to Scene.render, but is simplified since, for example, the sky box, atmosphere, and sun are not pickable.

Future Work

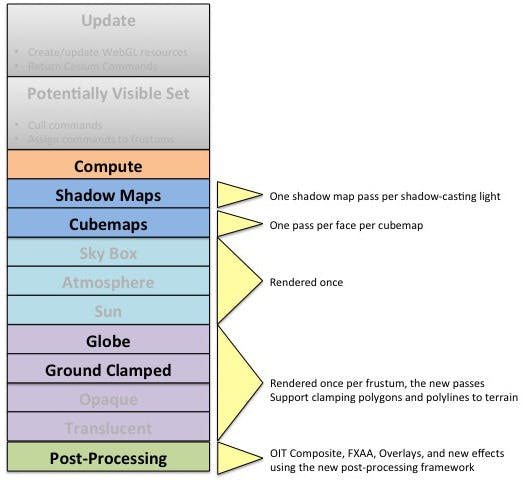

There are several in-progress and planned improvements to how a frame is rendered.

Ground Pass

The passes in Scene.render described above are common among graphics engines: OPAQUE, TRANSLUCENT, then OVERLAY. OPAQUE is actually broken down into GLOBE and OPAQUE. This is likely to be expanded so that the order is: the base globe, vector data clamped to the ground, and then general opaque objects. See #2172.

Shadows

Shadows will be implemented with shadow mapping. The scene is rendered from the perspective of each shadow-casting light, and each shadow-casting object contributes to a depth buffer or shadow map, which stores the distance to the nearest object from the perspective of the light. Then, in the main color pass, each shadow-receiving object checks the distance in each light’s shadow map to see if its fragment is inside a shadow. A production implementation is quite involved and needs to address aliasing artifacts, soft shadows, multiple frustums, and Cesium’s out-of-core terrain engine. See #2594.
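The depth comparison at the heart of shadow mapping can be sketched on the CPU as follows. This is a generic textbook illustration, not a planned Cesium design: in practice the test runs in a fragment shader, and the `isInShadow` name, the single-light setup, the nearest-texel lookup, and the constant bias are all assumptions made for the example.

```javascript
// shadowMap: size*size depths in [0, 1], as seen from the light (row-major).
// (u, v): the fragment's position projected into the light's view, in [0, 1].
// fragmentDepth: the fragment's own depth from the light, in [0, 1].
function isInShadow(shadowMap, size, u, v, fragmentDepth, bias = 0.005) {
  const x = Math.min(size - 1, Math.max(0, Math.floor(u * size)));
  const y = Math.min(size - 1, Math.max(0, Math.floor(v * size)));
  const occluderDepth = shadowMap[y * size + x];
  // The small bias avoids self-shadowing ("shadow acne") from limited precision.
  return fragmentDepth - bias > occluderDepth;
}

// A 2x2 shadow map: something sits close to the light in texel (0, 0);
// the other texels see nothing before the far plane.
const toyShadowMap = new Float32Array([0.3, 1.0, 1.0, 1.0]);

console.log(isInShadow(toyShadowMap, 2, 0.1, 0.1, 0.8)); // true: behind the occluder
console.log(isInShadow(toyShadowMap, 2, 0.9, 0.1, 0.8)); // false: nothing in the way
```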

Depth Textures

A subset of adding shadows is adding support for depth textures that, for example, can be used for depth-testing billboards against terrain and reconstructing world-space position from depth.

WebVR

Another part of adding shadows is the ability to render the scene from different perspectives. WebVR support can be built on this. The standard camera and frustum are used for culling and LOD selection, and then two off-center frustums, one for each eye, are used for rendering. NICTA’s VR plugin has a similar approach but uses two canvases.
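One common way to derive the two off-center frustums is the parallel-axis asymmetric-frustum construction, sketched below. This is a generic stereo rendering formula offered as an assumption about how such frustums could be computed, not a description of Cesium's or NICTA's code; `stereoFrustums`, `eyeSeparation`, and `focalDistance` are illustrative names.

```javascript
// Returns near-plane extents for each eye. Each eye camera is offset
// sideways by half the eye separation, and its frustum is skewed the
// opposite way so both frustums coincide at the focal distance.
function stereoFrustums({ fovY, aspect, near, far, eyeSeparation, focalDistance }) {
  const top = near * Math.tan(fovY / 2);
  const halfWidth = aspect * top;
  // Half the eye separation, projected from the focal plane onto the near plane.
  const shift = (eyeSeparation / 2) * (near / focalDistance);
  const makeFrustum = (offset) => ({
    left: -halfWidth + offset,
    right: halfWidth + offset,
    bottom: -top,
    top: top,
    near: near,
    far: far,
  });
  return {
    leftEye: makeFrustum(shift), // left eye sits at -eyeSeparation / 2
    rightEye: makeFrustum(-shift), // right eye sits at +eyeSeparation / 2
  };
}

const eyes = stereoFrustums({
  fovY: Math.PI / 3,
  aspect: 16 / 9,
  near: 0.1,
  far: 1000.0,
  eyeSeparation: 0.064,
  focalDistance: 2.0,
});
console.log(eyes.leftEye, eyes.rightEye);
```

Both frustums keep the same width and height as the standard symmetric frustum; only their horizontal extents are shifted, in mirrored directions.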

Cubemap Pass

Yet another extension from shadows is the ability to render cubemaps, i.e., six 2D textures forming a box that describe the environment around a point in the middle of the box. Cubemaps are useful for reflection, refraction, and image-based lighting. Cubemap passes can get expensive so I suspect this will only be used sparingly for on-the-fly generation.

Post-Processing Effects

Scene.render has a few post-processing effects hardcoded, such as sun bloom, FXAA, and even compositing for OIT. We plan to create a generic post-processing framework that takes textures as input, runs them through one or more post-processing stages, which are basically fragment shaders running on a viewport-aligned quad, and then outputs one or more textures. This will replace much of the hardcoded sun bloom, for example, with data that drives the post-processing framework, and open up many new effects such as depth of field, SSAO, glow, motion blur, and so on. See these notes.
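To make the idea of data-driven stages concrete, here is a toy CPU sketch. It is purely illustrative and not a proposed API: `runPostProcess` and the stage objects are hypothetical, one-dimensional arrays stand in for textures, and plain functions stand in for fragment shaders.

```javascript
// Each stage is data: a name, some uniforms, and a function from an input
// "texture" to an output "texture". The framework just chains them.
function runPostProcess(inputTexture, stages) {
  return stages.reduce(
    (texture, stage) => stage.execute(texture, stage.uniforms),
    inputTexture
  );
}

const brightenStage = {
  name: "brighten",
  uniforms: { factor: 2 },
  execute: (texture, uniforms) => texture.map((v) => v * uniforms.factor),
};

const boxBlurStage = {
  name: "boxBlur",
  uniforms: {},
  // 3-tap box filter with clamp-to-edge sampling.
  execute: (texture) =>
    texture.map((value, i) => {
      const left = texture[Math.max(0, i - 1)];
      const right = texture[Math.min(texture.length - 1, i + 1)];
      return (left + value + right) / 3;
    }),
};

// A bloom-like chain expressed as a list of stages rather than hardcoded passes.
const processed = runPostProcess([0, 0, 3, 0, 0], [brightenStage, boxBlurStage]);
console.log(processed); // [ 0, 2, 2, 2, 0 ]
```

Adding a new effect in such a design means appending another stage object to the list rather than editing the render function.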

Compute Pass

Cesium does old-school GPGPU for GPU-accelerated imagery reprojection where it renders an off-screen viewport-aligned quad to push the reprojection to the shader. This could be done with the post-processing framework during a compute pass at the start of a frame. See #751.

A potential future Cesium rendering pipeline (new stages in bold).

Acknowledgments

Dan Bagnell and I wrote most of the Cesium renderer. For entertainment, see our notes on the Cesium wiki. Ed Mackey did the original multi-frustum implementation at AGI in the 90s when I was still in high school.

References

[Bagnell13] Dan Bagnell. Weighted Blended Order-Independent Transparency. 2013.

[Cozzi13] Patrick Cozzi. Using Multiple Frustums for Massive Worlds. In Rendering Massive Virtual Worlds Course. SIGGRAPH 2013.

[McGuire13] McGuire and Bavoil. Weighted Blended Order-Independent Transparency. Journal of Computer Graphics Techniques (JCGT), vol. 2, no. 2, 122–141. 2013.

[ONeil05] Sean O’Neil. Accurate Atmospheric Scattering. In GPU Gems. Edited by Matt Pharr and Randima Fernando. 2005.

[Ring13a] Kevin Ring. Horizon Culling. 2013.

[Ring13b] Kevin Ring. Computing the horizon occlusion point. 2013.