Synchronized HTML5 Video with GPS Data

YouTube is littered with first-person helmet camera videos of every kind. In fact, a quick search turned up approximately 800,000 videos. They’re great at showing high-intensity action up close and personal. The problem with helmet cam videos is that they can’t convey any information about the bigger picture. Where exactly was the mountain that the cliff jumper was diving off of in that awesome video I just watched? How fast was he going when he deployed his parachute? Where did he land, and how large was the landing area? These are really interesting questions, but there’s generally no way to get this kind of information from the video.

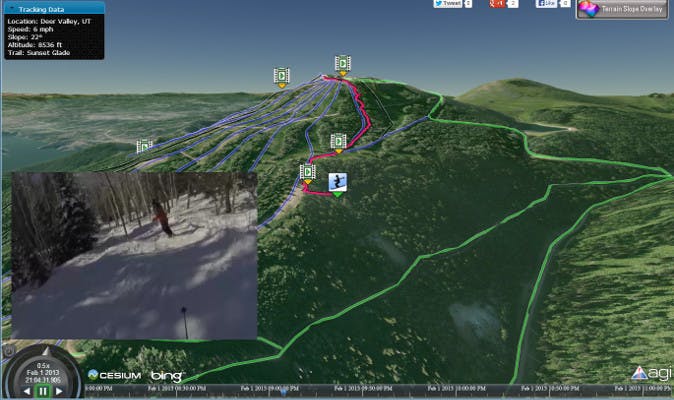

With this in mind, I set out to do something a little different. Armed with a GoPro helmet camera and a GPS tracking app (Google My Tracks) for my Android phone, a friend and I headed out to the Rocky Mountains in Utah for some alpine skiing fun. We recorded lots of video and about 25 hours of GPS tracks. With all this data and Cesium, I had everything I needed to build a simple app that combined the micro perspective captured with the helmet camera with the macro perspective that comes from GPS information. I called the project Powder Tracks.

Cesium is used to show the skier’s track on a 3D globe with 10 m National Elevation Dataset terrain and high-resolution Bing imagery. Videos taken during the trip are synchronized with the skier’s position and play alongside the animating visualization. Billboards are used to indicate the skier’s current position and the location of available videos. Camera flights provide smooth transitions to the next location when a user selects a new video to watch.

HTML5 Video

One of the great features in WebGL is the ability to interact with other web technologies, such as HTML5 video elements. By passing a loaded HTML5 video element as the source in a call to texSubImage2D, the current video frame is copied into a WebGL texture. We used this feature to create a DynamicVideoMaterial that can be used to texture various Cesium and CZML objects including polygons, ellipsoids, polylines, etc. through the Cesium Material system. With a little bit of timing logic, the video texture is kept in sync with the animating scene. The DynamicVideoMaterial is being developed in the Video Branch.
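The two pieces described above, copying the current frame into a texture and keeping the video aligned with the scene clock, can be sketched roughly as follows. This is not the DynamicVideoMaterial code. The function names, the `videoStartSeconds` parameter, and the 0.5 second drift tolerance are assumptions made for illustration, and the sketch assumes the texture was already allocated at the video's dimensions with texImage2D.

```javascript
// Hypothetical helper: the playback position (in seconds) the video should be
// at for a given scene time, or null when the scene time is outside the clip.
function targetVideoTime(sceneSeconds, videoStartSeconds, videoDuration) {
    const offset = sceneSeconds - videoStartSeconds;
    if (offset < 0 || offset > videoDuration) {
        return null;
    }
    return offset;
}

// Hypothetical helper: seeks the video only when it has drifted further than
// `tolerance` seconds from the scene clock, to avoid constant re-seeking.
// Returns false when the scene time falls outside the clip.
function syncVideo(video, sceneSeconds, videoStartSeconds, tolerance = 0.5) {
    const target = targetVideoTime(sceneSeconds, videoStartSeconds, video.duration);
    if (target === null) {
        return false;
    }
    if (Math.abs(video.currentTime - target) > tolerance) {
        video.currentTime = target;
    }
    return true;
}

// Copies the current video frame into an already-allocated WebGL texture.
// Returns false when the video has no decoded frame yet.
function uploadVideoFrame(gl, texture, video) {
    // readyState 2 is HAVE_CURRENT_DATA: at least one frame is available.
    if (video.readyState < 2) {
        return false;
    }
    gl.bindTexture(gl.TEXTURE_2D, texture);
    gl.texSubImage2D(gl.TEXTURE_2D, 0, 0, 0, gl.RGBA, gl.UNSIGNED_BYTE, video);
    gl.bindTexture(gl.TEXTURE_2D, null);
    return true;
}
```

In a render loop, one would call `syncVideo` with the current animation time and then `uploadVideoFrame` before drawing the textured object.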

High Resolution Terrain

What good is a 3D scene of mountains if you don’t have accurate terrain information? Out of the box, Cesium can render streaming terrain via the CesiumTerrainProvider. Using tools from the Geospatial Data Abstraction Library (GDAL), we converted high-resolution terrain data from the National Elevation Dataset (NED) for use in Cesium. The data was 1/3 arc-second (~10 meter) elevation data covering the state of Utah. To generate the elevation tiles, we first used gdal_translate to convert the raster data to GeoTIFF format and then used gdal2tiles to generate Tile Map Service (TMS) compatible elevation tiles.

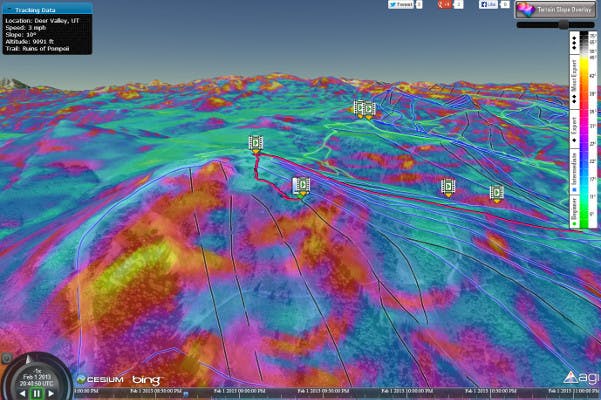

After working with the prototype application for a while, I realized that even the high-resolution terrain didn’t easily convey all of the information that I wanted, particularly which areas of the mountain were very steep. The solution was to use the NED elevation data again. This time, instead of converting the data to elevation tiles, I converted it to imagery tiles where pixel colors corresponded to the slope of the terrain. The new tiles were loaded into Cesium using a TileMapServiceImageryProvider and rendered over the Bing imagery. To perform the conversion, I again used GDAL tools. First, gdaldem slope converted the elevation GeoTIFF to a slope GeoTIFF where each pixel value represents the slope (in degrees) of the terrain. A second call, to gdaldem color-relief, converted the slope GeoTIFF to a slope-shade GeoTIFF by mapping slope values to my user-defined colors. Finally, I used gdal2tiles again to generate a TMS tile pyramid.
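To give a feel for what those two gdaldem steps compute, here is a small sketch of the math. It derives a slope in degrees from a 3x3 elevation window using Horn's formula (which, as far as I know, is the default algorithm for gdaldem slope) and then blends linearly between color stops, similar in spirit to gdaldem color-relief. The function names and the color ramp values are hypothetical, not the colors used in Powder Tracks, and the sketch assumes elevations and cell size are in the same unit (meters).

```javascript
// Slope in degrees at the center of a 3x3 elevation window (Horn's method).
// The window is row-major, north row first: [a, b, c, d, e, f, g, h, i].
function slopeDegrees(elevations, cellSize) {
    const [a, b, c, d, , f, g, h, i] = elevations;
    // Weighted east-west and north-south elevation gradients.
    const dzdx = ((c + 2 * f + i) - (a + 2 * d + g)) / (8 * cellSize);
    const dzdy = ((g + 2 * h + i) - (a + 2 * b + c)) / (8 * cellSize);
    return Math.atan(Math.hypot(dzdx, dzdy)) * 180 / Math.PI;
}

// Hypothetical color ramp: [slope in degrees, red, green, blue].
const SLOPE_RAMP = [
    [0, 255, 255, 255],  // flat: white
    [30, 255, 255, 0],   // moderate: yellow
    [45, 255, 0, 0],     // steep: red
];

// Maps a slope to an [r, g, b] color by linear interpolation between the two
// surrounding ramp stops; values outside the ramp clamp to the end colors.
function slopeColor(slope, ramp = SLOPE_RAMP) {
    if (slope <= ramp[0][0]) {
        return ramp[0].slice(1);
    }
    for (let k = 1; k < ramp.length; k++) {
        const [s0, ...c0] = ramp[k - 1];
        const [s1, ...c1] = ramp[k];
        if (slope <= s1) {
            const t = (slope - s0) / (s1 - s0);
            return c0.map((v, j) => Math.round(v + t * (c1[j] - v)));
        }
    }
    return ramp[ramp.length - 1].slice(1);
}
```

For example, a surface that rises 10 m for every 10 m cell to the east yields a slope of about 45 degrees, which this ramp colors red.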

CZML

One of the powerful features of Cesium is the ability to load and visualize time-dynamic data via CZML. I used various portions of the CZML Writer project to convert my data into CZML. Converting the GPS track data was the easiest part. The GPS app I used to collect the data saved all of the information in KML format using the Google gx extension. Using the CesiumLanguageConverter, all of my GPS tracks were automatically converted to CZML Paths. Slightly more troublesome was generating CZML Markers to indicate the georeferenced locations where videos were captured. I could have manually searched through the GPS data to match the video time tags with GPS positions, but I had about 60 videos and days’ worth of GPS data. That process would have been extremely painful. Instead, I bulk loaded the video times and the GPS positions into a Microsoft Access database. Using a simple C# application, I could then easily query the database to find the locations of all the videos. With this information available, I was able to use the C# czml-writer library directly to generate CZML for all of my video markers.
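The matching step itself boils down to looking up a position at a given time. The sketch below shows one way to do that lookup in JavaScript rather than with the Access database and C# application described above, so it is an alternative illustration and not the code used for Powder Tracks. The sample shape (`time`, `lat`, `lon`, `alt`), the `startTime` field, and the function names are all assumptions.

```javascript
// Returns the interpolated position at `time` from a track sorted by time,
// or null when `time` is outside the recorded range.
// Each sample is assumed to look like { time, lat, lon, alt }, with time in
// seconds, lat/lon in degrees, and alt in meters.
function positionAt(track, time) {
    if (track.length === 0 ||
        time < track[0].time ||
        time > track[track.length - 1].time) {
        return null;
    }
    // Binary search for the first sample whose time is >= the requested time.
    let lo = 0;
    let hi = track.length - 1;
    while (lo < hi) {
        const mid = (lo + hi) >> 1;
        if (track[mid].time < time) {
            lo = mid + 1;
        } else {
            hi = mid;
        }
    }
    const after = track[lo];
    if (lo === 0 || after.time === time) {
        return { lat: after.lat, lon: after.lon, alt: after.alt };
    }
    // Linear interpolation between the two surrounding samples. This is a
    // reasonable approximation when samples are only seconds apart.
    const before = track[lo - 1];
    const t = (time - before.time) / (after.time - before.time);
    const lerp = (x, y) => x + t * (y - x);
    return {
        lat: lerp(before.lat, after.lat),
        lon: lerp(before.lon, after.lon),
        alt: lerp(before.alt, after.alt),
    };
}

// Pairs each video (assumed shape: { name, startTime }) with the position
// where its recording started.
function locateVideos(track, videos) {
    return videos.map((v) => ({
        name: v.name,
        position: positionAt(track, v.startTime),
    }));
}
```

Videos whose start time falls outside the recorded track come back with a `null` position, which makes gaps in the GPS data easy to spot.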

Metadata

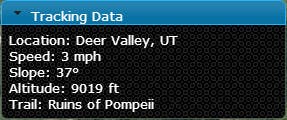

With the GPS track data loaded into Powder Tracks, I had the ability to extract additional “realtime” information about the skier. All of the data that I loaded via a CZML Path was available in the JavaScript code within a DynamicPath object. By querying the position within a few seconds of the current animation time, I was able to calculate instantaneous speed, altitude, and slope information. This information is presented to the user in a small window in the top corner of the screen and updates dynamically as the scene animates.
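As a rough sketch of that calculation, the function below estimates speed, altitude, and slope from two position samples taken a few seconds either side of the current time. It does not use the DynamicPath API. The sample shape (`time`, `lat`, `lon`, `alt`), the function names, and the choice of a spherical-earth haversine distance are assumptions, and the slope here is the slope of the skier's path between the two samples.

```javascript
const EARTH_RADIUS_METERS = 6371000; // mean radius, spherical approximation

// Great-circle (haversine) distance in meters between two samples,
// ignoring altitude. lat/lon are in degrees.
function horizontalDistance(p, q) {
    const rad = Math.PI / 180;
    const dLat = (q.lat - p.lat) * rad;
    const dLon = (q.lon - p.lon) * rad;
    const h = Math.sin(dLat / 2) ** 2 +
        Math.cos(p.lat * rad) * Math.cos(q.lat * rad) * Math.sin(dLon / 2) ** 2;
    return 2 * EARTH_RADIUS_METERS * Math.asin(Math.sqrt(h));
}

// Estimates the skier's state from two samples { time, lat, lon, alt }
// (time in seconds, alt in meters) bracketing the current animation time.
// Returns null when the samples are not in increasing time order.
function skierStats(before, after) {
    const dt = after.time - before.time;
    if (dt <= 0) {
        return null;
    }
    const run = horizontalDistance(before, after);
    const rise = after.alt - before.alt;
    return {
        // Distance along the path, including the vertical drop, in m/s.
        speed: Math.hypot(run, rise) / dt,
        // Midpoint altitude in meters.
        altitude: (before.alt + after.alt) / 2,
        // Steepness of the path in degrees, regardless of direction.
        slope: Math.atan2(Math.abs(rise), run) * 180 / Math.PI,
    };
}
```

A wider time window smooths out GPS noise at the cost of responsiveness, so the size of the window is worth tuning against real track data.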