SIGGRAPH 2018 Autonomous Driving Simulation and Visualization BOF

At SIGGRAPH we teamed up with Toyota Research Institute to co-organize the first-ever Autonomous Driving Simulation and Visualization BOF. The room was packed, with more than 120 attendees. People from many areas and industries gathered to see how computer graphics and simulation techniques improve autonomous vehicle testing.

Patrick Cozzi from the Cesium team shared a demo video of Cesium’s autonomous car capabilities for simulation and visualization.

Vangelis Kokkevis of the Toyota Research Institute shared a video of their Cesium app. Each car they send on a test drive is equipped with multiple sensors collecting data such as LiDAR photogrammetry, point clouds, and object detection. The app integrates the data from all the sensor logs and visualizes it so it can be streamed online.

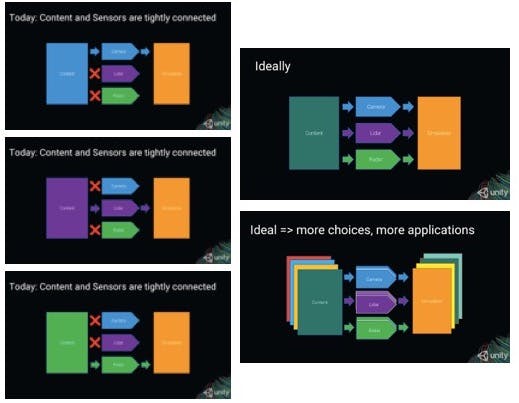

Jose De Oliveira of Unity spoke about their capture-based workflow and about better integrating disparate sensor types for improved simulations.

Magnus Wrenninge spoke on behalf of 7D Labs, a startup focused on synthetic data creation with roots in computer graphics research and film/VFX production. He gave a preview of SynScapes, a photorealistic synthetic dataset for street scene parsing that will be released publicly in the near future.

Peter Fryscak and Steve Rotenberg came from VectorZero to talk about simulation software for autonomous cars.

Those are just some of the highlights from the BOF. We also heard from Hugh Reynolds of Uber, Yongjoon Lee of Zoox, and Felipe Codevilla of CARLA. There is a lot of exciting work in this area!

If you’re interested in what Cesium brings to simulation and visualization for autonomous cars, send us a note.